Virtual Reality (VR) has gained considerable momentum recently, prompting standards development organizations (SDOs), including the Moving Picture Experts Group (MPEG), to take action. In this post we’d like to highlight the current status of this development, what MPEG is doing, and what else is going on in this (not so) new and exciting domain.

Before going into details, let’s take a brief look at the current state of the art and the device landscape. Oculus Rift, PlayStation VR, Google Cardboard, Samsung Gear VR, HTC Vive and others are existing products and technologies that enable immersive VR experiences. In this context, Virtual Reality (VR) is “a realistic and immersive simulation of a three-dimensional environment, created using interactive software and hardware that is experienced or controlled by movement of the body”. While VR is primarily designed and implemented with hardware and software components, 360 degree video is an actual recording of real life. Such recordings can be produced with specialized omnidirectional cameras and rigs at (relatively) low cost (e.g., GoPro/VideoStitch, Giroptic and Orah), making it easy to create user-generated content.

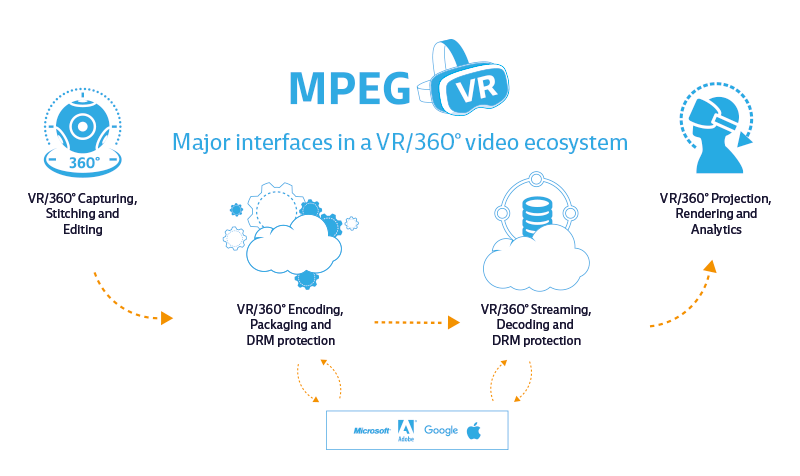

In 2016, MPEG started working on an initiative referred to as MPEG-VR (see my report from the 115th meeting) to develop a roadmap, coordinate the various VR-related activities within MPEG, and liaise with other SDOs. The major interfaces of such an ecosystem are shown in the figure above: (i) capturing, stitching, and editing, (ii) encoding, packaging, and (optionally) DRM protection, (iii) streaming, decoding, and (optionally) DRM decryption, and (iv) projection, rendering, and analytics.
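To make the four interface groups a bit more concrete, here is a minimal Python sketch that models them as an ordered pipeline. The `Stage` class, the group labels (`capture`, `preparation`, `delivery`, `presentation`) and the `pipeline_steps` helper are hypothetical names chosen for illustration only; they are not taken from any MPEG specification.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Stage:
    """One interface group of the VR ecosystem (hypothetical model)."""
    label: str                      # illustrative name, not an MPEG term
    steps: Tuple[str, ...]          # steps listed for this group
    optional: Tuple[str, ...] = ()  # steps marked as optional (e.g., DRM)


# The four groups, in the order they are listed in the text.
VR_ECOSYSTEM: List[Stage] = [
    Stage("capture", ("capturing", "stitching", "editing")),
    Stage("preparation", ("encoding", "packaging"), optional=("DRM",)),
    Stage("delivery", ("streaming", "decoding"), optional=("DRM",)),
    Stage("presentation", ("projection", "rendering", "analytics")),
]


def pipeline_steps(stages: List[Stage], with_optional: bool = False) -> List[str]:
    """Flatten the groups into a single list of steps, group by group.

    Optional steps are appended at the end of their group; this mirrors
    the listing order only, not a normative processing order.
    """
    steps: List[str] = []
    for stage in stages:
        steps.extend(stage.steps)
        if with_optional:
            steps.extend(stage.optional)
    return steps


if __name__ == "__main__":
    # Print one line per interface group, noting any optional steps.
    for i, stage in enumerate(VR_ECOSYSTEM, start=1):
        extra = f" (optional: {', '.join(stage.optional)})" if stage.optional else ""
        print(f"{i}. {stage.label}: {', '.join(stage.steps)}{extra}")
```

Keeping the optional DRM steps separate from the mandatory ones reflects the "(optionally)" qualifier in the list above, so a deployment without content protection can simply ignore them.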

As a first step, MPEG reviewed its internal VR-related efforts, resulting in a long list of activities: 360 degree 2D/3D video coding (incl. related audio extensions), Free Viewpoint Television, point cloud, lightfield, 3D Audio AR/VR extensions, audio wave field coding, Codec Independent Code Points (CICP), MPEG-V media context and control, media orchestration, scene description and application engine, DASH, ISO base media file format, Omnidirectional Media Application Format (OMAF), and media wearables.

To get feedback from VR/360 enthusiasts at large, MPEG published a survey, running over several months, to gather as many inputs as possible regarding the state of the art and possible future issues. Some preliminary results of this survey were presented at IBC 2016, and the final results will be evaluated during the 116th MPEG meeting in Chengdu, where further steps will also be discussed.

In any case, MPEG-VR will most likely result in many different outputs from probably all MPEG subgroups (e.g., systems, audio, video, 3DGC, and JCTs), and coordination among these activities is expected to take place at the plenary and joint meetings (during MPEG meetings) and in an Ad-Hoc Group (between MPEG meetings, via an email reflector). The first standard on the horizon to incorporate results from this activity is the Omnidirectional Media Application Format (OMAF), which is currently being drafted to address immediate market needs, although it is also acknowledged that this will only be a first step on what is probably a long and stony path ahead.

Bitmovin provides innovative solutions for VR and 360 degree video streaming and is therefore committed to supporting and contributing to MPEG-VR in the future to ensure interoperability with its solutions.

Other consortia/SDOs working in this domain include W3C (hosting a workshop at the same time as the MPEG meeting), 3GPP (26.918, Title: Virtual Reality (VR) media services over 3GPP), DVB (which started a study mission on this topic), DASH-IF (which hosted a workshop in May 2016 on “Streaming Virtual Reality with DASH”), and QUALINET (which created task forces on VR and immersive experiences). All of them maintain active liaisons with MPEG, but only the future will show which standard will actually foster innovative products and services. An important aspect, not yet fully addressed (and probably not well understood), is the Quality of Experience (QoE) of such applications and services, but this is another story…
