TL;DR

- Most observability dashboards mix real failures with recoverable events, making error metrics harder to trust and act on.

- New CRITICAL and INFO severity levels separate genuine playback failures from informational events, keeping retry errors visible without polluting error metrics.

- Session impact is now quantified. A new “Percentage of Sessions with Errors” metric shows how many playback sessions were actually affected, giving context that raw error counts can’t.

- Longer stack traces (up to 100 rows), expanded iOS ErrorDetails, OS major version filtering, and WebOS model and version detection make platform-specific root cause analysis faster.

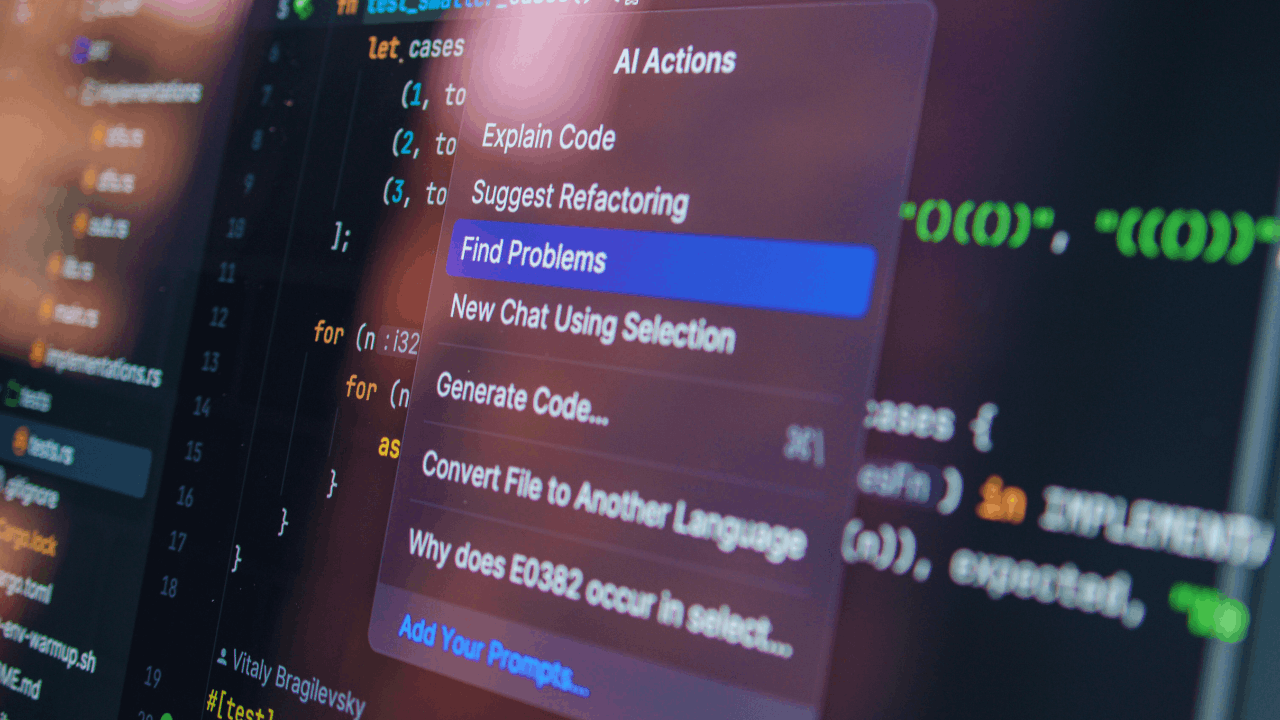

- The Observability MCP and AI Assistant let engineers explore and analyze error data in real time using natural language, without manual dashboard navigation.

Streaming teams rely on observability data to understand how playback performs across devices, platforms, and networks. Error metrics sit at the heart of this, helping engineering teams catch problems that affect startup time, playback stability, and overall viewer experience. But acting on those signals is not always straightforward.

Many observability dashboards mix genuine playback failures with recoverable events, warnings, and transient conditions that don’t actually need attention. When that happens, teams end up investigating noise instead of real issues, and the metrics they rely on to measure quality become harder to trust. As streaming services expand across browsers, mobile devices, connected TVs, and Smart TVs, the problem grows: more platforms mean more error variety and more room for signals to be misread.

In this blog, we walk through the latest improvements in Bitmovin’s Observability that make playback errors easier to interpret and investigate.

The challenge of interpreting streaming error data

Observability data plays an important role in identifying playback issues across streaming platforms. Error metrics help engineering teams detect problems that may affect startup time, playback stability, or device compatibility. Interpreting them becomes difficult, however, when different types of events are counted together: recoverable conditions and informational signals appear alongside real playback failures, making it harder to see quickly which issues actually require investigation.

This complexity increases as streaming services support more browsers, mobile devices, and Smart TV platforms. Differences in operating systems, device models, and playback environments can influence how errors appear in observability data, making root cause analysis more challenging.

Common challenges when analyzing playback errors include:

- Distinguishing real playback failures from recoverable events

- Understanding how errors affect playback sessions

- Isolating platform, device, or operating system specific issues

- Accessing sufficient diagnostic information for root cause analysis

What’s new in Bitmovin’s Observability

The improvements introduced in Bitmovin’s Observability focus on providing clearer context around playback errors and how they appear in monitoring data. Instead of relying only on raw error counts, these updates help teams better understand the type of event that occurred, how it affects playback sessions, and where it appears across devices and platforms.

The updates focus on several areas of error analysis, including:

- Dynamic Error Collection with CRITICAL and INFO severity levels, keeping retry and unavoidable errors visible without polluting error metrics

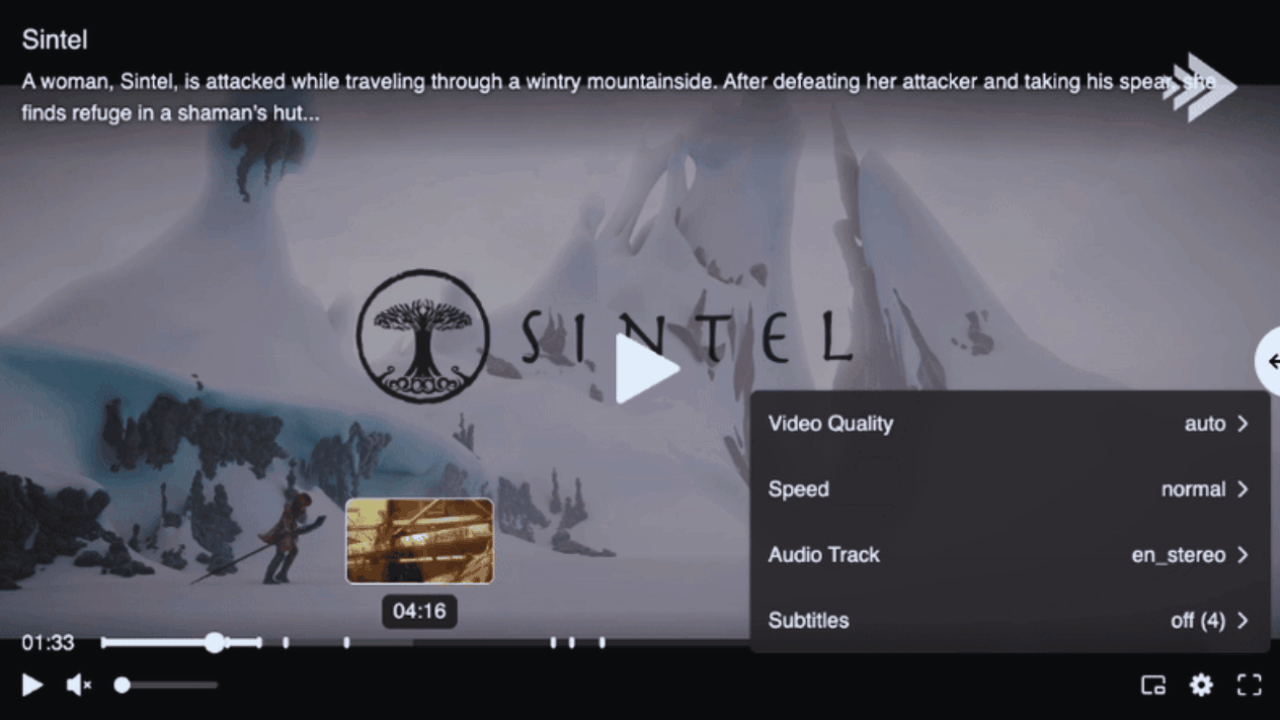

- Relative and absolute values for Error Sessions and Startup Failures

- Values for Error Sessions in the Top Error view

- OS major version as an additional filter and group-by dimension

- Expanded diagnostic data, including longer stack traces and additional ErrorDetails on iOS

- WebOS Smart TV model and OS version detection

- Percentage of Sessions with Errors, a new metric showing the share of sessions impacted by errors, available under the Error Sessions function in the dashboard

These changes provide clearer signals during error analysis and help teams interpret observability data with greater confidence when investigating playback issues. These insights are visible within the observability dashboard, while Observability MCP and the AI Assistant allow engineers and other teams to query and explore the underlying error data in real time.

Improving error classification

Understanding the type of error that occurs during playback is an important step when diagnosing streaming issues. When multiple types of events appear together within error metrics, it becomes harder to determine which signals represent real playback failures and which ones reflect recoverable or informational conditions. Without clear classification, engineering teams may need to spend additional time reviewing error data before deciding which issues require investigation.

To address this, Bitmovin’s Observability introduces dynamic error collection with severity levels. Events can now be categorized as CRITICAL, representing real playback failures, or INFO, representing informational events that do not necessarily indicate a playback failure.

We have also improved how certain events are categorized in monitoring views. Quality Threshold Exceeded is now treated as a warning rather than an error, so your error metrics better reflect what is genuinely failing rather than mixing in performance-related warnings.
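As a rough illustration of the idea, and not Bitmovin’s actual data model or API, the sketch below tags hypothetical events with a severity and counts only CRITICAL ones toward the error metric. The `ErrorEvent` fields, the error codes, and the `split_by_severity` helper are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    CRITICAL = "CRITICAL"  # a real playback failure
    INFO = "INFO"          # informational, e.g. a retry that recovered


@dataclass(frozen=True)
class ErrorEvent:
    session_id: str        # hypothetical field name
    code: int              # hypothetical error code
    severity: Severity


def split_by_severity(events):
    """Return (critical, info) lists so INFO events stay visible but separate."""
    critical = [e for e in events if e.severity is Severity.CRITICAL]
    info = [e for e in events if e.severity is Severity.INFO]
    return critical, info


events = [
    ErrorEvent("s1", 1001, Severity.CRITICAL),
    ErrorEvent("s2", 2002, Severity.INFO),
    ErrorEvent("s3", 2002, Severity.INFO),
]

critical, info = split_by_severity(events)
error_count = len(critical)  # only CRITICAL events feed the error metric
info_count = len(info)       # INFO events remain available for inspection
```

In this toy data set, three events were recorded but only one counts as an error, which is the separation the severity levels are meant to provide.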

Understanding the impact of playback errors

Detecting an error during playback is only part of the debugging process. Teams also need to understand how that error affects viewing sessions and whether it represents a broader issue across the platform. Without additional context, raw error counts can be difficult to interpret, especially when multiple types of events appear in monitoring data.

To address this, Bitmovin’s Observability introduces improvements that provide clearer visibility into how errors affect playback sessions. These updates make it easier to understand both the scale of an issue and how it relates to overall playback activity.

The additional context includes:

- Percentage of Sessions with Errors, which shows what proportion of playback sessions during a given period were affected, giving a clearer sense of scale and urgency than raw error counts alone

- Relative and absolute values for Error Sessions and Startup Failures, so you can judge whether a spike is significant or within normal range

- Values for Error Sessions in the Top Error view, so that context carries through as you investigate deeper into specific errors
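To make the difference between raw error counts and session impact concrete, here is a minimal sketch of how an absolute and a relative value could be derived from the same data. It assumes the metric is the number of distinct sessions with at least one error divided by all sessions in the period; the function name and inputs are hypothetical, and the dashboard’s exact definition may differ.

```python
def error_session_stats(error_event_session_ids, total_sessions):
    """Return (affected_sessions, percentage_of_sessions_with_errors).

    error_event_session_ids: one session ID per recorded error event
    total_sessions: all playback sessions in the same period
    """
    # Several errors in one session still count as one affected session.
    affected = len(set(error_event_session_ids))
    if total_sessions <= 0:
        return affected, 0.0
    return affected, 100.0 * affected / total_sessions


# Four error events, but they come from only two distinct sessions.
affected, pct = error_session_stats(["a", "a", "a", "b"], total_sessions=50)
print(f"{affected} error sessions, {pct:.1f}% of all sessions")
```

Here four error events translate to two affected sessions out of 50, so a count that looks like a spike may still touch only a small share of viewers.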

Debugging insights across devices and platforms

Error investigation often requires detailed diagnostic information and the ability to analyze issues across specific devices or operating systems. The latest updates expand the error data available during analysis and improve visibility across playback environments.

These capabilities include:

- Longer stack traces, now supporting up to 100 rows, providing a much fuller picture of how errors propagate through the playback stack

- Expanded ErrorDetails on iOS, providing additional context when investigating playback errors on Apple devices

- OS major version as a filter and group-by dimension, making it easier to isolate whether an issue is version-specific

- WebOS Smart TV model and OS version detection, improving visibility in Smart TV environments where device fragmentation can make debugging harder
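Grouping by OS major version can be sketched on exported session data as follows. This is an illustrative example only: the record fields (`os`, `os_version`, `had_error`) and the version strings are assumptions, not the platform’s schema.

```python
from collections import Counter


def os_major_version(version: str) -> str:
    """Extract the major version from a dotted version string."""
    head = version.strip().split(".", 1)[0]
    return head if head.isdigit() else "unknown"


# Hypothetical session records for illustration.
sessions = [
    {"os": "iOS", "os_version": "17.4.1", "had_error": True},
    {"os": "iOS", "os_version": "17.0", "had_error": True},
    {"os": "iOS", "os_version": "16.7", "had_error": False},
    {"os": "webOS", "os_version": "6.3.2", "had_error": True},
    {"os": "webOS", "os_version": "", "had_error": True},
]

# Count error sessions per (OS, major version) pair.
errors_by_major = Counter(
    (s["os"], os_major_version(s["os_version"]))
    for s in sessions
    if s["had_error"]
)

for (os_name, major), count in sorted(errors_by_major.items()):
    print(os_name, major, count)
```

Collapsing minor and patch versions into one bucket keeps the grouping small enough to spot whether errors cluster around a single major release.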

Wrapping it up

As streaming services expand across more devices and platforms, interpreting error signals becomes increasingly important for maintaining reliable playback experiences. When error metrics lack context, teams may spend additional time determining which issues affect viewers and which events represent recoverable conditions.

Bitmovin’s Observability helps address this challenge by providing clearer error classification, better visibility into playback session impact, and richer diagnostic data for investigation. These insights are available through the observability dashboard, as well as through the Observability MCP and AI Assistant, which make it easier for engineers and other teams to access and analyze this data in real time.

Try Bitmovin’s Observability for yourself and explore how these capabilities help teams analyze playback errors and investigate issues faster.

FAQs

What is Bitmovin’s Observability?

Bitmovin’s Observability is an analytics and monitoring platform for video streaming. It surfaces playback quality metrics, including errors, startup failures, buffering, and session data, to help engineering teams detect, investigate, and resolve issues affecting viewer experience across devices and platforms.

What is Dynamic Error Collection in Bitmovin’s Observability?

Dynamic Error Collection categorizes playback events by severity. CRITICAL events represent real playback failures that require investigation. INFO events are informational signals that are kept visible in the data but excluded from error metrics so they don’t inflate failure counts or mislead analysis.

What is the “Percentage of Sessions with Errors” metric?

It’s a new metric in Bitmovin’s Observability that shows what proportion of total playback sessions during a given period were affected by errors. Unlike raw error counts, this metric provides immediate context on scale and urgency, making it easier to judge whether a spike is a major incident or within normal range.

How does Bitmovin’s Observability help debug platform-specific streaming errors?

Several new capabilities support platform-level debugging: OS major version is now available as a filter and group-by dimension to isolate version-specific issues; stack traces now support up to 100 rows for a fuller view of error propagation; expanded ErrorDetails on iOS provide additional context for Apple device investigations; and WebOS Smart TV model and OS version detection improve visibility in Smart TV environments.

What is the Observability MCP and how does it help with error debugging?

The Observability MCP (Model Context Protocol) server connects AI-driven workflows to Bitmovin’s observability data. It allows teams to query playback error data in real time using natural language or automated workflows, without manually navigating the dashboard.

What is Bitmovin’s Observability AI Assistant?

The AI Assistant is a natural language interface built into Bitmovin’s Observability platform. It allows teams to ask questions about playback performance and error data directly, surfacing insights without requiring complex query construction or deep familiarity with the dashboard.