In software architecture, as in any problem-solving domain, it is important to solve the right problem in the right place.

A very well-known example of this is the React Virtual DOM. Before this concept was introduced, web developers had to add, update and delete DOM nodes on their own. React removes this concern by collecting the output of all the components in the tree into an in-memory (virtual) tree, comparing that tree with the one from the previous render, and updating the browser DOM with only the differences. This is great for the programmer, as they no longer have to think about those updates and can concentrate on their domain. It also allows React to decide when best to apply the result to the browser DOM, allowing for global performance improvements across the application. Furthermore, because components are meant to be pure functions of their props and state, unit testing becomes much simpler. And there are other benefits too, like being able to target a platform other than the DOM, as React Native does.

Another example is the Erlang VM, BEAM. Designed for scalability and resilience, this VM implements concurrency using the actor model. This is great for writing software that has to manage many concurrent operations, once again saving the programmer from having to think about such things. But, due to the use of message passing, it also allows code to be scaled up transparently to an arbitrary number of cores or even machines without needing to change the codebase materially. And because processes are isolated from one another, it is possible to let misbehaving processes crash without the overall system going down.

To put it another way, solving a problem in the right place has a compounding effect on the rest of the decisions that will be made while architecting software. This will lead to the domain logic being easier to express and understand, as the programmer no longer needs to think about orchestration concerns or side effects like DOM node manipulation.

Structured concurrency solves the right problem in the right place

In my previous article, I introduced structured concurrency and the benefits my team and I experienced when implementing it. I also mentioned the framework we built and how exceptionally well it performed when we used it to create a player POC.

These results are an excellent example of why solving a problem in the right place is so powerful, just like React’s Virtual DOM or the BEAM actor model. By solving the problem of “how to synchronise execution” or “how to avoid race conditions and dangling errors” as a fundamental part of the framework, it becomes possible to abstract away the async problems and concentrate on solving the domain problem instead, like building a video player.

Async bugs are a plague on programs that are highly dynamic and data-heavy. At Bitmovin, we know a thing or two about this, as video players are very much of that type: to stream video reliably, it is necessary to wait for and synchronise multiple asynchronous browser APIs (Fetch, the MSE API, etc.).

Check out this pull request for instance. It fixes an async bug in a large and, in our considered opinion, very high-quality video player.

The bug in question is a classic race condition: during seeking, the previous version of the code sometimes called a method on an object after the object itself had been disposed of. This led to unhandled exceptions and a player crash that was difficult to reproduce.
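To make the shape of this kind of bug concrete, here is a minimal sketch of a use-after-dispose race. It is not the code from the pull request: `SourceBufferWrapper`, `fetchSegment`, `loadInto` and `seekDuringDownload` are all hypothetical names, and the network request is simulated with a timer.

```typescript
// Hypothetical object that must not be used once it has been disposed of.
class SourceBufferWrapper {
  private disposed = false;
  readonly appended: string[] = [];

  append(segment: string): void {
    if (this.disposed) {
      throw new Error("append() called after dispose()");
    }
    this.appended.push(segment);
  }

  dispose(): void {
    this.disposed = true;
  }
}

// Stand-in for a network request that completes on a later tick.
function fetchSegment(name: string, delayMs: number): Promise<string> {
  return new Promise((resolve) => setTimeout(() => resolve(name), delayMs));
}

// A download that started before the seek still holds a reference to the old buffer.
async function loadInto(buffer: SourceBufferWrapper, name: string): Promise<void> {
  const segment = await fetchSegment(name, 10);
  buffer.append(segment); // throws if the buffer was disposed of in the meantime
}

async function seekDuringDownload(): Promise<string> {
  const buffer = new SourceBufferWrapper();
  const pending = loadInto(buffer, "segment-1");
  buffer.dispose(); // the seek tears down the old buffer while the download is in flight
  try {
    await pending;
    return "ok";
  } catch (error) {
    return (error as Error).message;
  }
}
```

Nothing in `loadInto` is wrong when read in isolation, which is part of why such bugs are hard to find: the failure only appears when the disposal happens between the start and the end of the download.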

I know from experience that a non-trivial amount of time is spent hunting for, understanding, and fixing this kind of issue, even though the fix itself is only a line or two of code. So, rather than spending engineering time tracking down such problems one by one, wouldn’t it be better to avoid them systemically? This is exactly what structured concurrency offers.

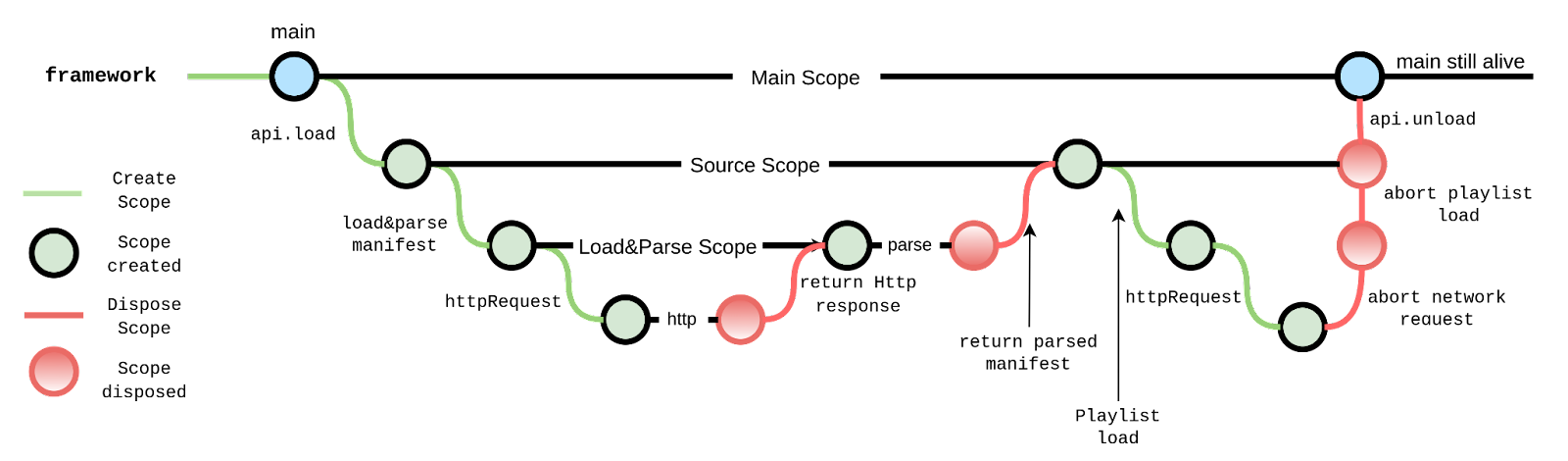

A concurrent hierarchy of scopes

The TypeScript framework that we have been working on implements structured concurrency so that we no longer have to worry about these problems.

In our framework, units of code are run within a scope that is known to the framework. It is possible to start other units of execution from within a scope and that code will then be executed in another dependent scope. Should an error occur, the error is automatically propagated to the parent scope, which either handles the error or recursively cancels all concurrent scopes before propagating the error in turn to its parent.

It is a trivial operation to fork a new scope, and this, in turn, allows the framework to intercept execution to implement cancellation. In this way, a parent scope can also cancel the execution of children.

Lastly, each scope is kept alive until all child scopes have finished executing.

This leads to the formation of a hierarchy of scopes, where any subtree is completely self-contained, cancellable, and disposable in case of error. It is possible to catch errors at any level of the tree and handle them gracefully while ensuring that the concerned sub-tree will no longer be referenced in any way.
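The rules above (dependent scopes, error propagation to the parent, cancellation of siblings, and a parent that outlives its children) can be sketched in a few dozen lines. This is only an illustration built on the standard `AbortController`, not our framework's API: `Scope`, `fork`, `run`, `Cancelled`, `sleep` and `runScopeDemo` are hypothetical names chosen for this example.

```typescript
// Marks a task that stopped because its scope was cancelled (hypothetical helper).
class Cancelled extends Error {}

type Task = (scope: Scope) => Promise<void>;

class Scope {
  private readonly controller = new AbortController();
  private readonly children: Promise<void>[] = [];
  private readonly errors: unknown[] = [];

  get signal(): AbortSignal {
    return this.controller.signal;
  }

  cancel(): void {
    this.controller.abort();
  }

  // Start a task in a new scope that depends on this one.
  fork(task: Task): void {
    const child = new Scope();
    if (this.signal.aborted) {
      child.cancel();
    } else {
      // Cancelling a parent cancels all of its descendants.
      this.signal.addEventListener("abort", () => child.cancel(), { once: true });
    }
    // A failing child reports its error to the parent.
    this.children.push(child.run(task).catch((error) => this.fail(error)));
  }

  async run(task: Task): Promise<void> {
    try {
      await task(this);
    } catch (error) {
      this.fail(error);
    }
    // Stay alive until every child has settled, including children forked late.
    for (let i = 0; i < this.children.length; i++) {
      await this.children[i];
    }
    if (this.errors.length > 0) {
      throw this.errors[0]; // propagate the first real error upwards
    }
  }

  private fail(error: unknown): void {
    if (error instanceof Cancelled) return; // cancellation is not a failure
    this.errors.push(error);
    this.cancel(); // cancel the remaining children of this scope
  }
}

// A timer that rejects with Cancelled as soon as the scope is cancelled.
function sleep(ms: number, signal: AbortSignal): Promise<void> {
  return new Promise((resolve, reject) => {
    if (signal.aborted) {
      reject(new Cancelled());
      return;
    }
    const onAbort = () => {
      clearTimeout(timer);
      reject(new Cancelled());
    };
    const timer = setTimeout(() => {
      signal.removeEventListener("abort", onAbort);
      resolve();
    }, ms);
    signal.addEventListener("abort", onAbort, { once: true });
  });
}

async function runScopeDemo(): Promise<string[]> {
  const log: string[] = [];
  try {
    await new Scope().run(async (scope) => {
      scope.fork(async (child) => {
        try {
          await sleep(1000, child.signal);
          log.push("slow child finished");
        } catch (error) {
          log.push("slow child cancelled");
          throw error;
        }
      });
      scope.fork(async (child) => {
        await sleep(10, child.signal);
        throw new Error("download failed");
      });
    });
  } catch (error) {
    log.push(`root saw: ${(error as Error).message}`);
  }
  return log;
}
```

Calling `runScopeDemo()` resolves to `["slow child cancelled", "root saw: download failed"]`: the failing child's error reaches the parent, the sibling is cancelled rather than left dangling, and the root only settles once both children have ended.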

Ultimately, writing code using the framework is as simple as writing standard async TypeScript. The programmer no longer needs to worry about race conditions caused by unexpected errors, and cancellation is also possible, to the extent permitted by JavaScript’s event loop: a scope can only be cancelled at a point where it yields, such as an await. The programmer is, therefore, responsible for avoiding long-running calculations, which is no different from standard practice in JavaScript.

Structured concurrency and scope termination

Performance is a consequence of structured concurrency

Let’s return to the bug we examined earlier. In our new model, the focus would no longer be on objects but on scopes and lifetimes. There would be a seek scope that would fork the necessary sub-scopes. Due to the scopes forming a tree, it would essentially be impossible to access an object that no longer exists, as a scope only has access to the context of its parent. That parent scope is available until after the child has ended, so that bug would not be able to exist.

The reason I am sure of this is that, in order to see our framework in action, we built a player POC on top of it. In this player, seeking works precisely as described above.

One great side effect of the programming model was that it turned out to be quite simple to implement background source loading and very fast source switching. When the source is switched, we simply cancel the manifest download pipeline and start a new one with the new URL. All dependent scopes, like segment downloads and manifest parsing, are cancelled immediately. There is no need to synchronise objects, re-instantiate them, or reset data storage.
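The following sketch shows the idea of switching sources by cancelling a pipeline. It is a simplification under stated assumptions: a single `AbortController` stands in for the scope tree, downloads are simulated with timers, and `Player`, `load`, `download`, `Cancelled` and `switchSourceDemo` are hypothetical names rather than parts of our framework.

```typescript
// Marks work that stopped because its pipeline was cancelled (hypothetical helper).
class Cancelled extends Error {}

// Stand-in for a network request; resolves with the URL after `ms` unless cancelled.
function download(url: string, ms: number, signal: AbortSignal): Promise<string> {
  return new Promise((resolve, reject) => {
    if (signal.aborted) {
      reject(new Cancelled());
      return;
    }
    const onAbort = () => {
      clearTimeout(timer);
      reject(new Cancelled());
    };
    const timer = setTimeout(() => {
      signal.removeEventListener("abort", onAbort);
      resolve(url);
    }, ms);
    signal.addEventListener("abort", onAbort, { once: true });
  });
}

class Player {
  private current?: AbortController;
  readonly events: string[] = [];

  // Switching source: cancel the running pipeline, then start a fresh one.
  load(url: string): Promise<void> {
    this.current?.abort(); // also stops the old manifest and segment downloads
    const controller = new AbortController();
    this.current = controller;
    return this.pipeline(url, controller.signal).catch((error) => {
      if (!(error instanceof Cancelled)) throw error;
      this.events.push(`cancelled ${url}`);
    });
  }

  private async pipeline(url: string, signal: AbortSignal): Promise<void> {
    const manifest = await download(url, 20, signal);
    this.events.push(`manifest ${manifest}`);
    // Segment downloads depend on the same signal, so they are cancelled together.
    await Promise.all([1, 2].map((n) => download(`${url}#segment-${n}`, 20, signal)));
    this.events.push(`segments ${url}`);
  }
}

async function switchSourceDemo(): Promise<string[]> {
  const player = new Player();
  const first = player.load("a.mpd");
  const second = player.load("b.mpd"); // switch before the first manifest has arrived
  await Promise.all([first, second]);
  return player.events;
}
```

Here `switchSourceDemo()` resolves to `["cancelled a.mpd", "manifest b.mpd", "segments b.mpd"]`. The old pipeline needs no explicit clean-up code of its own: everything that depended on it stops as a consequence of the cancellation.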

The effect of this is that, although the player is only a technology preview at this time, it performs above expectations. I will present some actual figures in my next post on the subject, but for now, it’s enough to say that it already performs very well on metrics such as seeking, source switching and even startup time. As explained above, I believe this is because we are not forced to manually orchestrate promises and callbacks, which means that efficiency is there by default.

Overall, I’m very excited about the potential of the framework due to the performance and the productivity my team has been able to achieve using it to build the player POC. In my next blog post, I will go into the benchmarking data I mentioned earlier and why the performance of our player POC has made it worthy of your attention.