Welcome to our comprehensive guide to MPEG-DASH in 2022. In this quick and informative article, Bitmovin CTO and Co-founder Christopher Mueller describes everything you need to know about MPEG-DASH (Dynamic Adaptive Streaming over HTTP).

Chapters:

- The History of MPEG-DASH

- What is MPEG-DASH (in a Nutshell)?

- Media Presentation Description (MPD)

- Segment Referencing Schemes

- Conclusion and Further Reading

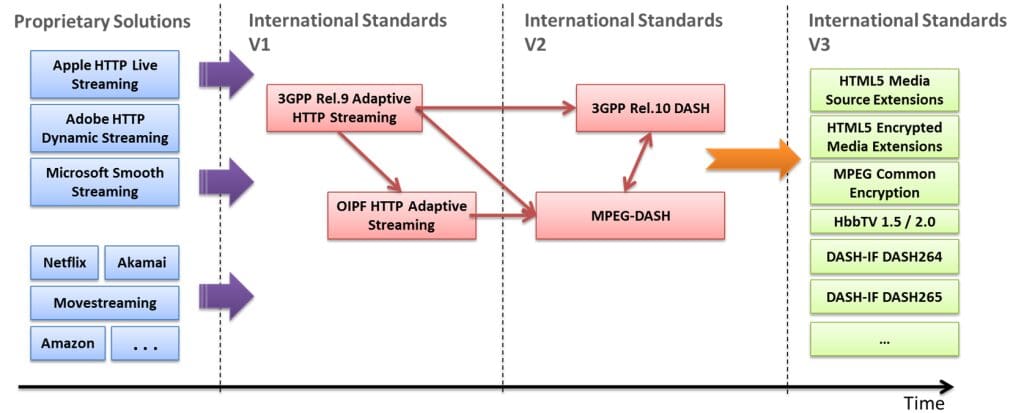

1: The History of MPEG-DASH (Dynamic Adaptive Streaming over HTTP, ISO/IEC 23009-1)

MPEG-DASH (Dynamic Adaptive Streaming over HTTP, ISO/IEC 23009-1) is a vendor-independent, international standard ratified by MPEG and ISO. Previous adaptive streaming technologies, such as Apple HLS, Microsoft Smooth Streaming, and Adobe HDS, were released by individual vendors, with limited support in company-independent streaming servers and playback clients. Because this vendor dependence was undesirable, standardization bodies started a harmonization process that resulted in the ratification of MPEG-DASH in 2012.

Key Targets and Benefits of MPEG-DASH:

- reduction of startup delays and buffering/stalls during the video

- continued adaptation to the bandwidth situation of the client

- client-based streaming logic enabling the highest scalability and flexibility

- use of existing and cost-effective HTTP-based CDNs, proxies, caches

- efficient traversal of NATs and firewalls through the use of HTTP

- Common Encryption: signalling, delivery, and use of multiple concurrent DRM schemes from the same file

- simple splicing and (targeted) ad insertion

- support for efficient trick modes (e.g., fast-forward and rewind)

In recent years, MPEG-DASH has been integrated into new standardization efforts, e.g., the HTML5 Media Source Extensions (MSE), which enable DASH playback via the HTML5 video and audio tags, and the HTML5 Encrypted Media Extensions (EME), which enable DRM-protected playback in web browsers. Furthermore, DRM protection with MPEG-DASH is harmonized across different systems with MPEG-CENC (Common Encryption), and MPEG-DASH playback on different Smart TV platforms is enabled via its integration in Hybrid Broadcast Broadband TV (HbbTV 1.5 and HbbTV 2.0). The use of the MPEG-DASH standard has also been simplified by industry efforts around the DASH Industry Forum and its DASH-AVC/264 recommendations, as well as by forward-looking approaches such as the DASH-HEVC/265 recommendation on using H.265/HEVC within MPEG-DASH.

Here is a table showing the MPEG-DASH standards:

Today, MPEG-DASH deployments continue to grow, accelerated by major VOD platforms such as Netflix and Google (YouTube), which have adopted the standard. Taking these two major sources of internet traffic into account, an estimated 50% of total internet traffic is already delivered via MPEG-DASH.

2: What is MPEG-DASH (in a Nutshell)?

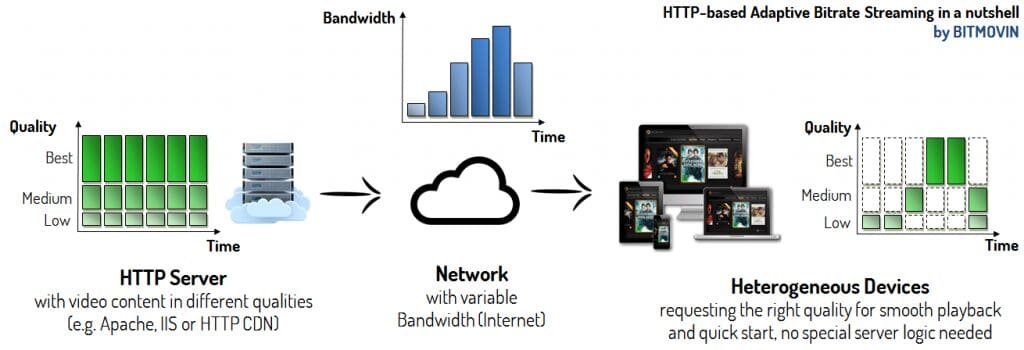

The basic idea of MPEG-DASH is this: encode the media at different bitrates or spatial resolutions and chop each encoding into segments. The segments are provided on a web server and can be downloaded through standard-compliant HTTP GET requests. As shown in the figure, the HTTP server offers three different qualities, i.e., Low, Medium, and Best, each chopped into segments of equal length. Adaptation of the bitrate or resolution is done on the client side for each segment; e.g., the client can switch to a higher bitrate, if bandwidth permits, on a per-segment basis. This is advantageous because the client knows best its own capabilities, its received throughput, and the context of the user.
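MPEG-DASH itself does not standardize how a client picks the quality of the next segment; that logic is left to the player. Purely as an illustration, the Python sketch below uses a simple throughput-based rule: pick the highest bitrate that fits within a safety margin of the measured throughput. The 0.8 safety factor, the bitrates assigned to Low, Medium, and Best, and the throughput measurements are all hypothetical; real players typically also consider buffer level and other signals.

```python
def select_bitrate(bitrates_bps, throughput_bps, safety_factor=0.8):
    """Return the highest bitrate that fits within a safety margin of the
    measured throughput, or the lowest bitrate if none fits.

    Simple throughput-based heuristic for illustration only; MPEG-DASH
    leaves the adaptation logic to the client.
    """
    budget = throughput_bps * safety_factor
    fitting = [b for b in bitrates_bps if b <= budget]
    return max(fitting) if fitting else min(bitrates_bps)


# Hypothetical bitrates (bits/s) for the Low, Medium, and Best qualities.
QUALITIES = {"Low": 500_000, "Medium": 1_500_000, "Best": 4_000_000}

# One decision per segment, for a hypothetical series of throughput measurements.
measured = [600_000, 2_500_000, 10_000_000, 1_000_000]
choices = [select_bitrate(QUALITIES.values(), t) for t in measured]
print(choices)  # [500000, 1500000, 4000000, 500000]
```

Because the decision is re-evaluated for every segment, a drop in throughput (the last measurement) immediately leads back to a lower quality.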

Here’s an example of an MPEG-DASH workflow:

In order to describe the temporal and structural relationships between segments, MPEG-DASH introduces the so-called Media Presentation Description (MPD). The MPD is an XML file that represents the different qualities of the media content and the individual segments of each quality with HTTP Uniform Resource Locators (URLs). This structure binds the segments to their bitrate (resolution, etc.) and to other properties (e.g., start time and segment duration). As a consequence, each client first requests the MPD, which contains the temporal and structural information for the media content, and, based on that information, requests the individual segments that best fit its requirements.

3: Media Presentation Description (MPD)

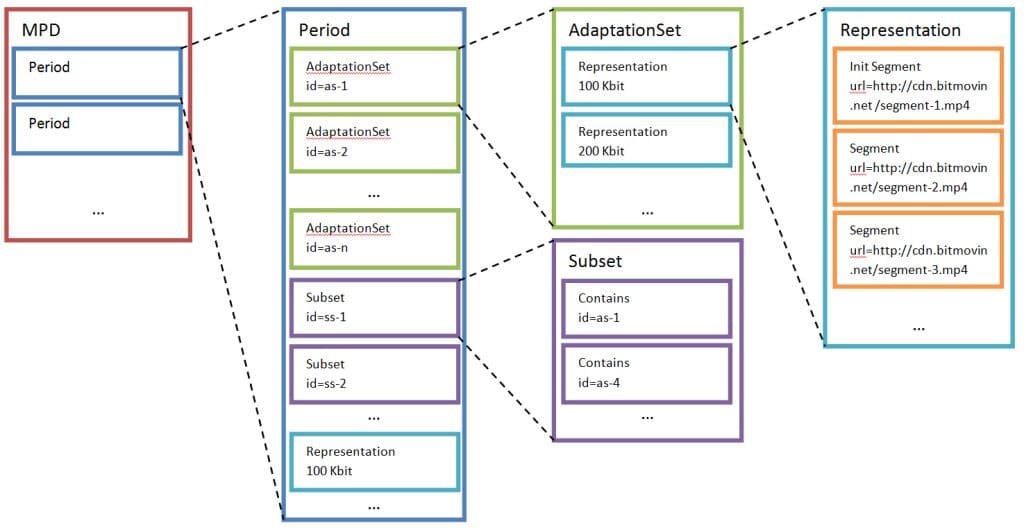

The MPEG-DASH Media Presentation Description (MPD) is a hierarchical data model. Each MPD contains one or more Periods. Each Period contains media components, such as video components (e.g., different camera angles or different codecs), audio components (e.g., different languages or different types of information, such as a director's commentary), and subtitle or caption components. These components have certain characteristics, like bitrate, frame rate, and number of audio channels, which do not change during a Period. Nevertheless, the client is able to adapt during a Period by choosing among the bitrates, resolutions, codecs, etc. that are available in that Period.

Furthermore, Periods can be used to separate content, e.g., for ad insertion or for changing the camera angle in a live football game. For example, if an ad should only be available in high resolution while the content is available from standard definition to high definition, you would simply introduce a separate Period for the ad that contains only the ad content in high definition.

Before and after this Period, there are other Periods that contain the actual content (e.g., the movie) in multiple bitrates and resolutions, from standard to high definition.

What are AdaptationSets?

Typically, media components such as video, audio or subtitles/captions, etc. are arranged in AdaptationSets. Each Period can contain one or more AdaptationSets that enable the grouping of different multimedia components that logically belong together.

For example, components with the same codec, language, resolution, audio channel format (e.g., 5.1, stereo), etc. could be within the same AdaptationSet. This mechanism allows the client to rule out a range of multimedia components that do not fulfill its requirements. A Period can also contain a Subset, which restricts the allowed combinations of AdaptationSets and thus expresses the intention of the MPD's creator, for example, allowing high-definition content only in combination with the 5.1 audio channel format.

This graph shows an example of an MPD model.

An AdaptationSet consists of a set of Representations containing interchangeable versions of the respective content, such as different resolutions and bitrates. Although a single Representation would be enough to provide a playable stream, multiple Representations allow the client to adapt the media stream to its current network conditions and available bandwidth. This helps ensure smooth playback.
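To make the MPD hierarchy concrete, the following Python sketch parses a small, hypothetical MPD with the standard library's xml.etree.ElementTree and walks from MPD to Period to AdaptationSet to Representation. The Period id, Representation ids, the 640x272 low-quality video, and the audio bitrate are invented for illustration; urn:mpeg:dash:schema:mpd:2011 is the MPD XML namespace. For simplicity, the sketch reads mimeType only from the AdaptationSet, although real MPDs may also place it on the Representation.

```python
import xml.etree.ElementTree as ET

# XML namespace used by MPEG-DASH MPDs.
NS = {"mpd": "urn:mpeg:dash:schema:mpd:2011"}

# Hypothetical MPD: one Period with a video AdaptationSet (two Representations)
# and an English audio AdaptationSet (one Representation).
MPD_XML = """<MPD xmlns="urn:mpeg:dash:schema:mpd:2011" type="static"
     mediaPresentationDuration="PT10S" minBufferTime="PT2S"
     profiles="urn:mpeg:dash:profile:isoff-on-demand:2011">
  <Period id="main">
    <AdaptationSet mimeType="video/mp4">
      <Representation id="video-low" bandwidth="500000" width="640" height="272"/>
      <Representation id="video-1500k" bandwidth="1558322" width="1277" height="544"/>
    </AdaptationSet>
    <AdaptationSet mimeType="audio/mp4" lang="en">
      <Representation id="audio-en" bandwidth="128000"/>
    </AdaptationSet>
  </Period>
</MPD>"""


def list_representations(mpd_xml):
    """Walk MPD -> Period -> AdaptationSet -> Representation and return
    (period id, mimeType, representation id, bandwidth) tuples."""
    root = ET.fromstring(mpd_xml)
    rows = []
    for period in root.findall("mpd:Period", NS):
        for aset in period.findall("mpd:AdaptationSet", NS):
            for rep in aset.findall("mpd:Representation", NS):
                rows.append((period.get("id"), aset.get("mimeType"),
                             rep.get("id"), int(rep.get("bandwidth"))))
    return rows


for row in list_representations(MPD_XML):
    print(row)
```

A player would then restrict itself to the Representations of the AdaptationSets it can use (e.g., the right language) and adapt among their bandwidths.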

Of course, there are also further characteristics beyond bandwidth that describe the different Representations and enable adaptation. Representations may differ in the codec used, in decoding complexity and therefore the necessary CPU resources, or in rendering technology, just to name a few examples. Representations are chopped into Segments to enable switching between individual Representations during playback. Those Segments are described by a URL and, in certain cases, by an additional byte range if they are stored in a larger, contiguous file.

The Segments in a Representation usually have the same length in terms of time and are arranged according to the media presentation timeline, which serves as the timeline for synchronization and enables smooth switching between Representations during playback. For live streaming scenarios, Segments can also have an availability time, signalled as wall-clock time, from which they are accessible. In contrast to other systems, MPEG-DASH neither restricts the segment length nor gives advice on the optimal length; it can be chosen depending on the given scenario. Longer Segments allow more efficient compression, because the Group of Pictures (GOP) can be longer, and they cause less network overhead, because each Segment is requested via HTTP and each request introduces a certain amount of HTTP overhead. Shorter Segments, in contrast, are used for live scenarios and for highly variable bandwidth conditions such as mobile networks, as they enable faster and more flexible switching between bitrates.
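The request-count side of this trade-off is simple arithmetic. The sketch below counts how many Segment requests a single Representation needs for a hypothetical two-hour movie at a few example segment lengths (examples, not recommendations). The 500-byte per-request overhead is an assumed placeholder rather than a measured value, and switching is assumed to happen only at Segment boundaries.

```python
import math


def segment_requests(content_seconds, segment_seconds):
    """Number of Segment requests needed to play one Representation end to end."""
    return math.ceil(content_seconds / segment_seconds)


MOVIE_SECONDS = 2 * 60 * 60      # hypothetical two-hour movie
ASSUMED_OVERHEAD_BYTES = 500     # assumed HTTP overhead per request (placeholder)

for seg in (2, 4, 10):
    n = segment_requests(MOVIE_SECONDS, seg)
    # Assumes bitrate switches only happen at Segment boundaries.
    print(f"{seg:>2} s segments: {n} requests, "
          f"~{n * ASSUMED_OVERHEAD_BYTES / 1e6:.2f} MB request overhead, "
          f"switch opportunity every {seg} s")
```

Going from 2-second to 10-second Segments cuts the number of requests by a factor of five, but the client then has to wait up to five times longer before it can react to a bandwidth change.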

Subsegments

Segments may also be subdivided into smaller Subsegments, each of which represents a set of access units within the given Segment. In this case, a Segment index is available in the Segment that describes the presentation time range and byte position of each Subsegment. The client may download this index in advance and use it to generate the appropriate Subsegment requests as HTTP/1.1 byte-range requests. During playback, switching between Representations is not possible at arbitrary points in the stream, and certain constraints have to be considered. For example, Segments are not allowed to overlap, and dependencies between Segments are also not allowed. To enable switching between Representations, MPEG-DASH introduces Stream Access Points (SAPs) at which switching is possible. For example, each Segment typically begins with an IDR frame (in H.264/AVC), so that the client can switch Representations after any complete Segment.
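The following Python sketch shows how a client could turn a Segment index into HTTP/1.1 byte-range requests. It assumes the index has already been parsed into per-Subsegment durations and sizes (in ISO BMFF, this information comes from the sidx box); the starting offset, sizes, and timescale are hypothetical, and parsing the box itself is out of scope.

```python
from dataclasses import dataclass


@dataclass
class SubsegmentRef:
    """Simplified Segment index entry (cf. sidx subsegment_duration/referenced_size)."""
    duration: int  # in timescale units
    size: int      # in bytes


def subsegment_requests(first_byte, refs, timescale):
    """Return (start time in seconds, HTTP Range header value) per Subsegment.

    first_byte: file offset of the first Subsegment (for a sidx box, the byte
    right after the box plus its first_offset field).
    """
    requests = []
    offset, t = first_byte, 0
    for ref in refs:
        last = offset + ref.size - 1   # HTTP byte ranges include both endpoints
        requests.append((t / timescale, f"bytes={offset}-{last}"))
        offset += ref.size
        t += ref.duration
    return requests


# Hypothetical index: three 2-second Subsegments at timescale 24.
index = [SubsegmentRef(48, 1000), SubsegmentRef(48, 1200), SubsegmentRef(48, 900)]
for start, rng in subsegment_requests(835, index, timescale=24):
    print(f"{start:4.1f} s -> Range: {rng}")
```

Since the index maps presentation times to byte positions, the same table also lets a client seek: it only needs to find the Subsegment whose start time precedes the target time and request that byte range.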

4: Segment Referencing Schemes

Segments are typically referenced through URLs as defined in RFC 3986, using HTTP or HTTPS, possibly restricted by a byte range. The byte range can be signaled through a range attribute (e.g., range on Initialization or mediaRange on SegmentURL) and must comply with RFC 2616. Segments are part of a Representation, while elements like BaseURL, SegmentBase, SegmentList, and SegmentTemplate add further information, such as location, availability, and other properties. Specifically, a Representation should contain only one of the following options:

- one or more SegmentList elements

- one SegmentTemplate

- one or more BaseURL elements, at most one SegmentBase element and no SegmentTemplate or SegmentList element.

SegmentBase

SegmentBase is the simplest way of referencing segments in the MPEG-DASH standard. It is used when only one media segment is present per Representation, which is then referenced through a URL in the BaseURL element. If a Representation contains more segments, either SegmentList or SegmentTemplate must be used.

For example, a Representation using SegmentBase could look like this:

```xml
<Representation mimeType="video/mp4"
                frameRate="24"
                bandwidth="1558322"
                codecs="avc1.4d401f" width="1277" height="544">
  <BaseURL>http://cdn.bitmovin.net/bbb/video-1500k.mp4</BaseURL>
  <SegmentBase indexRange="0-834"/>
</Representation>
```

The Representation example above references one single segment through the BaseURL, which is the 1500 kbps video quality of the corresponding content. The segment index of this quality is described by the SegmentBase attribute indexRange. This means that the information about Random Access Points (RAPs) and other initialization information is contained in bytes 0 through 834, i.e., the first 835 bytes, since byte ranges include both endpoints.
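Fetching this index therefore takes a single byte-range request. The Python sketch below builds (but does not send) such a request with the standard library's urllib.request, using the BaseURL and indexRange from the example; whether that host still serves the file is not assumed, and the helper name index_request is hypothetical.

```python
import urllib.request


def index_request(base_url, index_range):
    """Build an HTTP GET request for the byte range given in SegmentBase@indexRange.

    Returns the request and the number of bytes it covers (the range is
    inclusive). The request is only constructed here, not sent.
    """
    first, last = (int(x) for x in index_range.split("-"))
    req = urllib.request.Request(base_url, headers={"Range": f"bytes={first}-{last}"})
    return req, last - first + 1


req, size = index_request("http://cdn.bitmovin.net/bbb/video-1500k.mp4", "0-834")
print(req.get_method(), req.full_url, req.get_header("Range"), size)
```

Passing req to urllib.request.urlopen would send it; a server that supports range requests answers with 206 Partial Content.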

SegmentList

The SegmentList contains a list of SegmentURL elements, which the client should play back in the order in which they occur in the MPD. A SegmentURL element contains a URL to a segment and possibly a byte range. Additionally, an Initialization segment (as in the example below) or an index segment can be signaled at the beginning of the SegmentList.

Here is an example of a Representation using SegmentList:

```xml
<Representation mimeType="video/mp4"
                frameRate="24"
                bandwidth="1558322"
                codecs="avc1.4d401f" width="1277" height="544">
  <SegmentList duration="10">
    <Initialization sourceURL="http://cdn.bitmovin.net/bbb/video-1500/init.mp4"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-0.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-1.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-2.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-3.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-4.m4s"/>
  </SegmentList>
</Representation>
```
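A client's handling of such a SegmentList can be sketched in a few lines of Python: request the Initialization segment first, then the SegmentURL entries in document order. The sketch parses the example above with xml.etree.ElementTree (without the MPD namespace, since the excerpt omits it) and derives each Segment's start time from the duration attribute, using the default timescale of 1 when none is given. The helper name segment_list_plan is hypothetical.

```python
import xml.etree.ElementTree as ET

# The SegmentList example from above, as a standalone string.
REPRESENTATION_XML = """<Representation mimeType="video/mp4" frameRate="24"
    bandwidth="1558322" codecs="avc1.4d401f" width="1277" height="544">
  <SegmentList duration="10">
    <Initialization sourceURL="http://cdn.bitmovin.net/bbb/video-1500/init.mp4"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-0.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-1.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-2.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-3.m4s"/>
    <SegmentURL media="http://cdn.bitmovin.net/bbb/video-1500/segment-4.m4s"/>
  </SegmentList>
</Representation>"""


def segment_list_plan(rep_xml):
    """Return the Initialization URL and (start seconds, media URL) per Segment."""
    seg_list = ET.fromstring(rep_xml).find("SegmentList")
    timescale = int(seg_list.get("timescale", "1"))   # default timescale is 1
    seg_seconds = int(seg_list.get("duration")) / timescale
    init_url = seg_list.find("Initialization").get("sourceURL")
    media = [(i * seg_seconds, s.get("media"))
             for i, s in enumerate(seg_list.findall("SegmentURL"))]
    return init_url, media


init_url, media = segment_list_plan(REPRESENTATION_XML)
print("init:", init_url)
for start, url in media:
    print(f"{start:5.1f} s  {url}")
```

With duration="10" and no timescale, the five Segments start at 0, 10, 20, 30, and 40 seconds.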

SegmentTemplate

The SegmentTemplate element provides a mechanism to construct a list of segments from a given template: specific identifiers are substituted by dynamic values to create the list of segments. This has several advantages. For example, SegmentList-based MPDs can become very large because each segment needs to be referenced individually, whereas with SegmentTemplate the same list can be described by a few lines that indicate how to build it.

Here is a number-based SegmentTemplate:

```xml
<Representation mimeType="video/mp4"
                frameRate="24"
                bandwidth="1558322"
                codecs="avc1.4d401f" width="1277" height="544">
  <SegmentTemplate media="http://cdn.bitmovin.net/bbb/video-1500/segment-$Number$.m4s"
                   initialization="http://cdn.bitmovin.net/bbb/video-1500/init.mp4"
                   startNumber="0"
                   timescale="24"
                   duration="48"/>
</Representation>
```

The example above shows the number-based SegmentTemplate mechanism. With timescale="24" and duration="48", each segment is 48 / 24 = 2 seconds long, and $Number$ is replaced by consecutive segment numbers beginning at startNumber="0". Instead of multiple individual segment references through SegmentURL, as in the SegmentList example, a SegmentTemplate describes the same use case in just a few lines, which makes the MPD more compact. This is especially useful for longer movies with multiple Representations, where an MPD with SegmentList could be several megabytes in size. Such a large MPD would heavily increase the startup latency of a stream, as the client has to fetch the MPD before it can start the actual streaming process.
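The $Number$ substitution can be sketched in Python as follows. The SegmentTemplate excerpt does not state the total presentation duration, so a hypothetical 10-second presentation is assumed (in a full MPD this would come from, e.g., the mediaPresentationDuration attribute). The helper name number_template_urls is hypothetical, and format tags such as $Number%05d$ are not handled by this simplified version.

```python
import math


def number_template_urls(media, start_number, timescale, duration, presentation_seconds):
    """Expand a number-based SegmentTemplate into a list of Segment URLs.

    Simplified: only the plain $Number$ identifier is substituted.
    """
    segment_seconds = duration / timescale            # 48 / 24 = 2 s in the example
    count = math.ceil(presentation_seconds / segment_seconds)
    return [media.replace("$Number$", str(start_number + i)) for i in range(count)]


urls = number_template_urls(
    "http://cdn.bitmovin.net/bbb/video-1500/segment-$Number$.m4s",
    start_number=0, timescale=24, duration=48,
    presentation_seconds=10,  # hypothetical total duration
)
for url in urls:
    print(url)
```

The result is the same five URLs as in the SegmentList example, derived from one template instead of five explicit SegmentURL elements.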

Time-Based SegmentTemplate

The SegmentTemplate element can also contain a $Time$ identifier, which is substituted with each segment's start time as signaled in the SegmentTimeline (either the t attribute or the start time derived from the durations of the preceding segments). The SegmentTimeline element provides an alternative to the duration attribute with additional features, such as:

- specifying arbitrary segment durations

- specifying exact segment durations

- specifying discontinuities in the media timeline

The SegmentTimeline also uses run-length compression, which is especially efficient for a sequence of segments with the same duration: the r attribute of an S element gives the number of additional segments that repeat the same duration d. When SegmentTimeline is used with SegmentTemplate, the following conditions must apply:

- at least one sidx box shall be present

- all values of the SegmentTimeline shall describe accurate timing, equal to the information in the sidx box

Here’s an example of an MPD excerpt with a SegmentTemplate that is based on a SegmentTimeline:

```xml
<Representation mimeType="video/mp4"
                frameRate="24"
                bandwidth="1558322"
                codecs="avc1.4d401f" width="1277" height="544">
  <SegmentTemplate media="http://cdn.bitmovin.net/bbb/video-1500/segment-$Time$.m4s"
                   initialization="http://cdn.bitmovin.net/bbb/video-1500/init.mp4"
                   timescale="24">
    <SegmentTimeline>
      <S t="0" d="48" r="5"/>
    </SegmentTimeline>
  </SegmentTemplate>
</Representation>
```

The resulting segment requests of the client would be as follows:

- http://cdn.bitmovin.net/bbb/video-1500/init.mp4

- http://cdn.bitmovin.net/bbb/video-1500/segment-0.m4s

- http://cdn.bitmovin.net/bbb/video-1500/segment-48.m4s

- http://cdn.bitmovin.net/bbb/video-1500/segment-96.m4s

- …
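The run-length expansion behind this list can be sketched in Python. The sketch parses the SegmentTimeline from the excerpt above, emits each S element r + 1 times, and lets an S element without a t attribute continue from the end of the previous one. Negative r values, which repeat until the next S element or the end of the Period, are deliberately not handled in this simplified sketch, and the helper name expand_timeline is hypothetical.

```python
import xml.etree.ElementTree as ET

TIMELINE_XML = '<SegmentTimeline><S t="0" d="48" r="5"/></SegmentTimeline>'
MEDIA = "http://cdn.bitmovin.net/bbb/video-1500/segment-$Time$.m4s"


def expand_timeline(timeline_xml):
    """Return (start time, duration) in timescale units for every Segment."""
    segments = []
    next_t = 0
    for s in ET.fromstring(timeline_xml).findall("S"):
        t = int(s.get("t", next_t))   # missing t: continue after previous Segment
        d = int(s.get("d"))
        r = int(s.get("r", "0"))      # r = number of additional repetitions
        if r < 0:
            raise NotImplementedError("open-ended repeat (r < 0) not supported here")
        for _ in range(r + 1):
            segments.append((t, d))
            t += d
        next_t = t
    return segments


segments = expand_timeline(TIMELINE_XML)
urls = [MEDIA.replace("$Time$", str(t)) for t, _ in segments]
for url in urls:
    print(url)
```

With r="5", the single S element describes six Segments starting at 0, 48, 96, 144, 192, and 240; at timescale="24", they cover 12 seconds in total.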

5: Conclusion and Further Reading

MPEG-DASH is a very broad standard and this is just a brief overview of some essential features and mechanisms.

We continue to write informative posts about the MPEG-DASH standard. In the meantime, you can try out MPEG-DASH on your own and encode content to MPEG-DASH through a Cloud-based Encoding Service.

More Readings:

- Multi-Cloud Video Encoding

- Encoding Definition and Adaptive Bitrates

- HTML5 Video Tag Guide

- HEVC vs VP9: The Battle of the Video Codecs

- Quality of Experience (QoE)

Did you know?

Bitmovin has a range of video streaming services that can help you deliver content to your customers effectively.

Its variety of features allows you to create content tailored to your specific audience, without the stress of setting everything up yourself. Built-in analytics also help you make technical decisions to deliver the optimal user experience.

Why not try Bitmovin for free and see what it can do for you?

We hope you found this guide useful! If you did, please don’t be afraid to share it on your social networks!