Quality of Experience (QoE) in Video Technology [2022 Guide]

Welcome to our comprehensive guide to Quality of Experience in video technology.

If you’re looking for the following:

- Why QoE in Video Matters Today

- QoE and Revenue Loss Statistics

- Video Encoders and QoE

- Quality Assessment Scoring Methods

- Encoding Choices and QoE

Then you are in the right place.

Let’s get started!

Why Quality of Experience in Video Matters Today

Content owners are investing large sums in their premium content, so it is more important than ever that they also invest in maximizing quality at delivery.

Content needs to be prepared and streamed in impeccable quality in order to satisfy viewer expectations, and those expectations are getting increasingly demanding. Recent events have shown us that viewers are not shy to air their grievances when things don’t look or stream right.

From major sporting events to big-budget series, more of us expect the immersive deep colors and vivid images promised by HDR and 4K. When they aren’t forthcoming, users are quick to voice their displeasure.

Game of Thrones fans may recall the final season’s quality fiascos. Social media lit up with posts regarding the HBO streaming experience. Rather than mentions of epic battle scenes or plot twists, viewers took to social media to complain of difficult-to-see scenes, washed-out colors, and image artifacts — especially apparent on large television screens.

The key take-away: Great content is no longer enough to keep audiences engaged!

QoE and Revenue Loss Statistics

Industry studies show that as many as 33% of users leave a stream due to poor streaming quality. Verizon estimates that OTT video services delivering average or poor-quality experiences account for as much as 25% loss in revenue.

With the explosion of VOD platforms competing for our attention, it is vital that quality not be a reason for your audience to churn or tune out.

So, what’s changed?

Only a decade ago, viewing high-definition video online was a luxury. Now high video quality has become a commodity, and it continues to evolve.

High Definition and 4K resolutions are currently the industry standards (with 8K becoming more widespread).

In addition to higher pixel counts, the quality of each pixel has improved over the years as well. Today, each pixel is represented with more bits (for example, 10 bits per color channel instead of 8), which allows finer gradations of color and brightness and enables HDR technologies.

Audiences agree that these technologies offer a superior viewing experience over past media formats. However, implementing cutting-edge media technology is not always seamless.

Online streaming has proved to be one of the most challenging applications for new media formats.

Some of the challenges that content providers face include:

Lack of standardization

Targeting each device with a suitable codec is necessary to optimize quality, and it becomes increasingly difficult as software and hardware codec support fragments. Encoders must also prepare content in several HDR formats (HLG for broadcast, HDR10 and Dolby Vision for streaming, etc.) to reach viewers across devices.

Authentic 4K/8K experiences start with video production

Unless the entire upstream pipeline consists of a native 4K environment and downstream devices support 4K, image scaling is required.

Last-mile bandwidth limitations

Even with faster home and mobile networks and more efficient codecs, compressing 4K, 8K and HDR video into a size which can be efficiently streamed at the last mile is difficult without compromising quality.
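To give a sense of scale for the last-mile problem, here is a rough back-of-the-envelope calculation of the uncompressed bitrate of a 4K HDR signal. It is only a sketch: the 60 fps frame rate, 4:2:0 chroma subsampling, and 16 Mbit/s streaming target are assumptions chosen for illustration, not figures from this guide.

```python
def raw_bitrate_bps(width, height, fps, bit_depth, samples_per_pixel=1.5):
    """Uncompressed video bitrate in bits per second.

    samples_per_pixel is 1.5 for 4:2:0 chroma subsampling (one luma sample
    per pixel plus two chroma planes at quarter resolution) and 3.0 for 4:4:4.
    """
    return width * height * samples_per_pixel * bit_depth * fps


# Assumed example: 3840x2160, 60 fps, 10-bit, 4:2:0
uhd_hdr = raw_bitrate_bps(3840, 2160, fps=60, bit_depth=10)
target_bps = 16_000_000  # assumed streaming bitrate, for illustration only

print(f"raw: {uhd_hdr / 1e9:.2f} Gbit/s")
print(f"compression needed: about {uhd_hdr / target_bps:.0f}:1")
```

Under these assumptions the raw signal is several gigabits per second, so the encoder has to remove the vast majority of the data before the stream can reach a typical home connection.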

Delivering a high-definition stream free of network interruptions (rebuffering) is no longer enough to satisfy viewers and subscribers. Today’s viewers expect immersive video experiences.

They want to take advantage of the rich features their screens and devices support — 4K, 8K, HDR, and next-generation audio.

Video Encoders’ Responsibility

Modern-day Adaptive Bitrate (ABR) video players are resilient to bandwidth fluctuations, but video encoders must be able to produce the clearest possible images at the most efficient bitrates to satisfy Quality of Experience (QoE) expectations.
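As a rough illustration of how an ABR player copes with bandwidth fluctuations, the sketch below picks the highest rendition that fits within the measured throughput. The function name, the bitrate ladder, and the 0.8 safety factor are all hypothetical; real players use more elaborate heuristics, such as buffer-based logic.

```python
def pick_rendition(ladder_kbps, measured_kbps, safety=0.8):
    """Return the highest rendition bitrate that fits within a safety margin
    of the measured throughput, falling back to the lowest rendition."""
    budget = measured_kbps * safety
    fitting = [b for b in sorted(ladder_kbps) if b <= budget]
    return fitting[-1] if fitting else min(ladder_kbps)


ladder = [400, 1200, 2500, 5000, 8000]  # hypothetical bitrate ladder in kbit/s

for throughput in (9000, 3500, 300):
    print(throughput, "kbit/s ->", pick_rendition(ladder, throughput), "kbit/s")
```

The player can only choose among the renditions the encoder produced, which is why the quality of each rung in the ladder matters so much.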

Whether a talking head in a newsroom, an action-packed war scene, or a close-up of the game-winning goal, encoders must be flexible enough to maintain pristine quality in all content scenarios.

But exactly how much does quality matter and how should content owners identify and address quality problems?

Many still believe the key to high-quality video is higher bitrates.

That approach does not leverage the power of codecs or consider the limited bandwidth many audiences face, nor is it cost-effective.

Whether through increased delivery costs or quality-related churn, wrongly equating bitrate with quality will have a negative impact on the bottom line.

The most effective approach to optimizing encoding profiles will ensure visual perceptual quality is not compromised (improved QoE), bitrates are reduced for better delivery and availability performance (improved Quality of Service), and storage and delivery costs go down (improved economics).
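The link between bitrate and delivery cost can be made concrete with some simple arithmetic. The sketch below is illustrative only: the viewing hours and the price per gigabyte are made-up numbers, and real CDN pricing is usually tiered.

```python
def gigabytes_per_hour(bitrate_mbps):
    """Data delivered for one hour of viewing at a constant average bitrate."""
    bits = bitrate_mbps * 1_000_000 * 3600
    return bits / 8 / 1_000_000_000


def monthly_delivery_cost(bitrate_mbps, viewing_hours, price_per_gb):
    """Delivery cost for a month, given total viewing hours and a flat price."""
    return gigabytes_per_hour(bitrate_mbps) * viewing_hours * price_per_gb


# Hypothetical service: 100,000 viewing hours per month at 0.02 per GB
before = monthly_delivery_cost(8.0, viewing_hours=100_000, price_per_gb=0.02)
after = monthly_delivery_cost(6.0, viewing_hours=100_000, price_per_gb=0.02)

print(f"before: {before:.0f}, after: {after:.0f}, saved: {before - after:.0f}")
```

In this made-up scenario, lowering the average bitrate from 8 to 6 Mbit/s at the same perceptual quality cuts delivery cost by a quarter.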

(State-of-the-Art) Quality Assessment Scoring Methods

A viewer’s rating of quality is important for experience metrics; however, it is not a reliable measurement of quality for production and distribution stakeholders such as service providers or network operators.

A typical consumer can provide valuable insight through subjective quality assessment, but subjective measurements often lack repeatability and scalability.

For this reason, we focus on repeatable and objective quality measurement methods.

Although there are many measurements, we have identified three primary methods, plus one extension, that are well suited to objective quality assessment:

Video Multi-Method Assessment Fusion (VMAF):

One of the latest metrics adopted by the streaming community is Netflix’s Video Multi-method Assessment Fusion (VMAF). It predicts subjective video quality based on reference and distorted video sequences.

The metric can be used to evaluate the quality of different video codecs, encoders, encoding settings, or transmission variants.
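One common way to compute VMAF is FFmpeg’s libvmaf filter. The sketch below only builds and prints the command line rather than running it. It assumes an FFmpeg build with libvmaf enabled, and the file names are placeholders.

```python
import shlex


def vmaf_command(distorted, reference):
    """Build an FFmpeg command line that scores `distorted` against `reference`.

    The libvmaf filter takes the distorted video as its first input and the
    reference as its second; the score is reported in FFmpeg's log output.
    """
    return ["ffmpeg", "-i", distorted, "-i", reference,
            "-lavfi", "libvmaf", "-f", "null", "-"]


# Placeholder file names, for illustration only
cmd = vmaf_command("encoded.mp4", "source.mp4")
print(shlex.join(cmd))
```

Both inputs are expected to have the same resolution and frame rate, so a lower-resolution rendition typically needs to be scaled back up to the reference size before comparison.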

Structural Similarity (SSIM):

The structural similarity index is a method for predicting the perceived quality of digital television and cinematic pictures, as well as other kinds of digital images and videos.

As its name indicates, SSIM measures the similarity between two images.

The SSIM index is a full reference metric; in other words, the measurement or prediction of image quality is based on an initial uncompressed or distortion-free image as a reference.

SSIM is designed to improve on traditional methods such as peak signal-to-noise ratio (PSNR) and mean squared error (MSE).
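To show what a structural comparison looks like in practice, here is a deliberately simplified SSIM computed once over a whole grayscale image. This is a sketch, not the reference implementation: the published method computes the index over local windows and averages the results, and the function name and sample pixel values here are hypothetical.

```python
def global_ssim(ref, dist, max_value=255):
    """SSIM computed once over the whole image (no sliding window).

    ref and dist are equal-length sequences of grayscale pixel values.
    """
    n = len(ref)
    mu_x = sum(ref) / n
    mu_y = sum(dist) / n
    var_x = sum((x - mu_x) ** 2 for x in ref) / n
    var_y = sum((y - mu_y) ** 2 for y in dist) / n
    cov = sum((x - mu_x) * (y - mu_y) for x, y in zip(ref, dist)) / n
    # Small constants that stabilize the division (commonly used values)
    c1 = (0.01 * max_value) ** 2
    c2 = (0.03 * max_value) ** 2
    return ((2 * mu_x * mu_y + c1) * (2 * cov + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2))


reference = [50, 60, 70, 80]   # hypothetical pixel values
distorted = [52, 58, 75, 77]

print(global_ssim(reference, reference))  # identical images score 1 (up to rounding)
print(global_ssim(reference, distorted))  # distortion lowers the score
```

Unlike a pure error measure, the index combines comparisons of mean brightness, contrast, and structure (the covariance term), which is what lets it track perceived quality more closely.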

SSIMPLUS:

The SSIMPLUS Viewer Score is a commercially available family of algorithms from the team that invented SSIM, building on two decades of subsequent research and development.

SSIMPLUS measures video experience from content creation to consumption in an apples-to-apples fashion and assigns scores from 0 to 100, linearly matched to human subjective ratings.

SSIMPLUS also adapts its scores to the intended viewing device and to the viewer type (Studio, Expert, or Typical), which makes it possible to compare video across different resolutions, frame rates, dynamic ranges, and content types.

According to its authors, SSIMPLUS achieves higher accuracy, due to its ability to assess all commonly occurring impairments, and higher speed than other image and video quality metrics. The Viewer Score is validated using publicly available and commonly used subject-rated datasets. A visual comparison with other objective quality assessment approaches is available here.

Peak Signal-to-Noise Ratio (PSNR):

The Peak Signal-to-Noise Ratio is a video quality metric that expresses, in decibels, the ratio between the maximum possible signal value and the error (noise) introduced by compression. It is computed from the mean squared error (MSE) between the reference and the distorted video.

PSNR is most commonly used to measure the quality of reconstruction of lossy compression codecs (e.g., for image compression).

When comparing codecs, PSNR serves as a rough approximation of the human perception of reconstruction quality.

Generally speaking, a higher PSNR indicates a higher-quality reconstruction, although in some cases it does not.

However, in cases where the codecs and/or content are different, the validity of this metric can vary greatly; therefore, you should be extremely careful when comparing results.

PSNR is well established, especially in research, and is also used within the industry when measuring at scale, mainly because of its simplicity.

But it is also known not to correlate well with human perception. Other metrics, such as SSIM or VMAF, correlate better with subjective scores but can be quite expensive and time-consuming to compute.
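The simplicity of PSNR is easy to see in code: it is derived directly from the mean squared error between the reference and the distorted pixels. The sketch below assumes 8-bit samples (peak value 255), and the pixel values are made up for illustration.

```python
import math


def mse(ref, dist):
    """Mean squared error between two equal-length pixel sequences."""
    return sum((r - d) ** 2 for r, d in zip(ref, dist)) / len(ref)


def psnr(ref, dist, max_value=255):
    """Peak signal-to-noise ratio in decibels; infinite for identical inputs."""
    error = mse(ref, dist)
    if error == 0:
        return math.inf
    return 10 * math.log10(max_value ** 2 / error)


reference = [50, 60, 70, 80]   # hypothetical 8-bit pixel values
distorted = [52, 58, 75, 77]

print(f"MSE:  {mse(reference, distorted):.2f}")
print(f"PSNR: {psnr(reference, distorted):.2f} dB")
```

Because every pixel error is weighted equally regardless of where it occurs or how visible it is, two encodes with the same PSNR can look quite different to a viewer.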

In the end, it is up to the individual encoding professional to decide which method to use when testing video quality.

There are also subjective quality scores such as the Mean Opinion Score (MOS) and Differential Mean Opinion Score (DMOS), and the industry is still in the process of determining the gold standard of objective quality measurement.

Encoding Choices

With objective quality assessment tools in hand, it is now easier for you and encoding professionals to evaluate and select which encoding technology will best suit your content delivery (and quality) needs.

So which technologies currently exist in the market, and what are the ways to encode (especially for quality)?

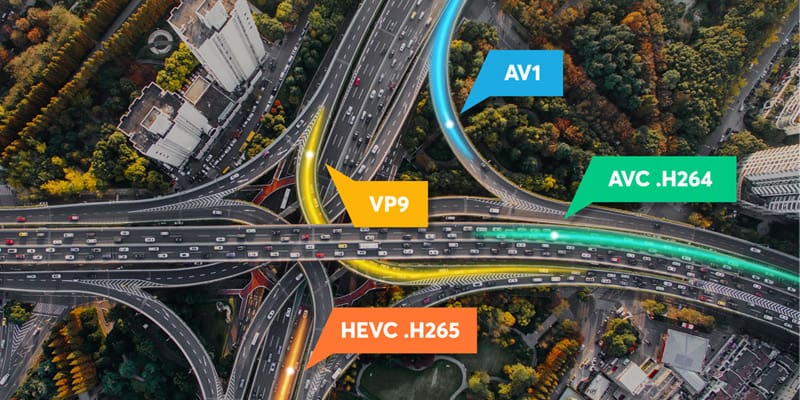

Codec Support Selection – it’s a multi-codec world!

Video Codecs:

H.264/AVC

The industry standard for video compression, developed jointly by the ITU-T Video Coding Experts Group (VCEG) and the Moving Picture Experts Group (MPEG) and first published in 2003. According to our 2019 Video Developer Report, it is currently used by over 90% of video developers.

H.265/HEVC

The successor to H.264, standardized in 2013. It is commonly cited as offering up to 50% higher compression efficiency than its predecessor.

VP9

Google’s royalty-free video codec, available since 2013. Mostly used on YouTube, but otherwise does not offer full device reach. Great for saving on CDN and bandwidth costs!

AV1

Another open, royalty-free codec, designed by the Alliance for Open Media (AOMedia), a consortium of video tech companies including Google, Facebook, Netflix, Amazon, Microsoft, and Bitmovin.

AV1 offers 70% better compression rates than H.264 but is currently limited by support within browsers and devices. However, this is slated to change within the coming two years.

Next generation codecs:

VVC

Versatile Video Coding (VVC), also called H.266, ISO/IEC 23090-3, and MPEG-I Part 3, is a video compression standard finalized on 6 July 2020 by the Joint Video Experts Team (JVET), a joint team of the VCEG working group of ITU-T Study Group 16 and the MPEG working group of ISO/IEC JTC 1/SC 29.

EVC

MPEG-5 Essential Video Coding (EVC) is a video compression standard that was completed in April 2020 by decision of MPEG Working Group 11 at its 130th meeting. The standard consists of a royalty-free subset and individually switchable enhancements.

LCEVC

Low Complexity Enhancement Video Coding (LCEVC) is an ISO/IEC video coding standard developed by the Moving Picture Experts Group (MPEG) under the project name MPEG-5 Part 2 LCEVC.

Benefits of single codec usage vs Multi-codec support

Even though H.264 is ubiquitous and widely supported at the hardware level, it is much less efficient than next-generation codecs in terms of compression rate.

By encoding your videos using a multi-codec approach, you can aim for higher quality at lower bitrates on devices that support newer codecs, while H.264 renditions preserve device reach.
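A multi-codec setup ultimately comes down to serving each device the most efficient codec it can decode. The sketch below illustrates that selection. The preference order, codec labels, and device capabilities are assumptions made for the example, not a recommendation from this guide.

```python
# Assumed preference order, most efficient first (hypothetical ranking)
PREFERENCE = ["av1", "hevc", "vp9", "h264"]


def pick_codec(device_codecs, encoded_codecs, preference=PREFERENCE):
    """Return the first preferred codec that the device can decode and that
    we have actually encoded, or None if there is no overlap."""
    for codec in preference:
        if codec in device_codecs and codec in encoded_codecs:
            return codec
    return None


encoded = {"av1", "hevc", "h264"}  # renditions we chose to produce

print(pick_codec({"h264", "hevc"}, encoded))        # newer TV or phone
print(pick_codec({"h264"}, encoded))                # legacy device
print(pick_codec({"av1", "vp9", "h264"}, encoded))  # modern browser
```

Keeping H.264 in the encoded set is what guarantees that every device still finds a playable rendition.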

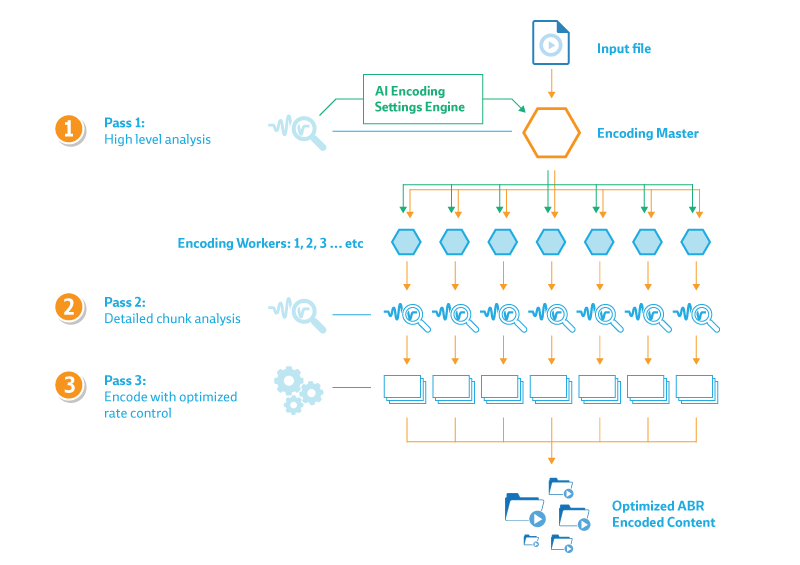

This graphic shows you how to perform an encode

Now that you’ve selected which codecs you’ll be using, the next step is to determine how you’ll encode your video content. To retain the best quality during conversion, we’ve determined that multi-pass encoding is the best option. Below you’ll find the types of multi-pass encodes, along with per-title encoding:

2-Pass Encoding

A file is analyzed thoroughly in the first pass and an intermediate file is created. In the second pass, the encoder looks up the intermediate file and allocates bits accordingly, so the actual encoding takes place during the second pass.
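The two-pass idea can be sketched in a few lines: the first pass gathers per-frame statistics, and the second pass uses them to spread a fixed bit budget. This is a toy model under stated assumptions. Real encoders use far richer statistics and rate-control models, and the complexity values and function names below are hypothetical.

```python
def first_pass(frame_complexities):
    """Analysis pass: record a complexity estimate per frame.

    Stands in for the intermediate (stats) file written by a real encoder.
    """
    return list(frame_complexities)


def second_pass(stats, total_bits):
    """Encoding pass: split the bit budget in proportion to complexity."""
    total_complexity = sum(stats)
    return [total_bits * c / total_complexity for c in stats]


# Hypothetical clip: static shot, motion, static shot, heavy action
stats = first_pass([1, 3, 1, 5])
allocation = second_pass(stats, total_bits=1_000_000)

for complexity, bits in zip(stats, allocation):
    print(f"complexity {complexity}: {bits:.0f} bits")
```

Because the encoder has seen the whole file before committing any bits, complex scenes can receive more of the budget without exceeding the overall target.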

3-Pass Encoding

Similar to 2-Pass encoding, 3-Pass encoding analyzes the video three times from the beginning to end before the encoding process begins. While scanning the file, the encoder writes information about the original video to its own log file and uses that log to determine the best possible way to fit the video within the bitrate limits the user has set for the encoding process.

Per-Title Encoding

A form of encoding optimization that customizes the bitrate ladder of each video based on the complexity of the video file. The ultimate goal is to optimize towards a bitrate that provides just enough room for the codec to encapsulate information to present a perfect viewing experience.

Another way to consider it is that the optimized adaptive package is reduced to contain just the information needed for optimal viewing quality. Anything beyond the human eye’s ability to perceive is stripped out.
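One simple way to picture per-title optimization is to run a few trial encodes, score each with an objective metric, and keep the lowest bitrate that still meets a quality target. The sketch below assumes this approach. The scores, bitrates, and target are invented for illustration, and production systems typically use more sophisticated complexity analysis.

```python
def per_title_bitrate(measurements, target_score):
    """Pick the lowest bitrate whose measured quality meets the target.

    measurements: list of (bitrate_kbps, quality_score) from trial encodes.
    Falls back to the highest tested bitrate if nothing reaches the target.
    """
    passing = [bitrate for bitrate, score in measurements if score >= target_score]
    if passing:
        return min(passing)
    return max(bitrate for bitrate, _ in measurements)


# Hypothetical trial results: (bitrate in kbit/s, quality score out of 100)
cartoon = [(1000, 88), (2000, 94), (4000, 97), (6000, 98)]
sports = [(1000, 62), (2000, 78), (4000, 90), (6000, 95)]

print("cartoon:", per_title_bitrate(cartoon, target_score=93), "kbit/s")
print("sports: ", per_title_bitrate(sports, target_score=93), "kbit/s")
```

With these made-up numbers, the simple cartoon reaches the target at a third of the bitrate the sports clip needs, which is exactly the saving a fixed, one-size-fits-all ladder would miss.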

Some of the true magic behind encoders is their ability to choose how to implement or tune a given codec.

In some cases, encoders allow users to configure and optimize codec compression settings, like motion estimation or GOP size and structure.

It goes without saying, but the best way to ensure top quality, even through an encode, is to supply high-quality sources: start with pristine-quality videos and use best practices for signal acquisition and contribution.
