VOD ENCODING

Per-Title Encoding

Efficiently encode video, maintaining the highest visual quality with a customized adaptive bitrate ladder.

Benefits

Simplified workflows

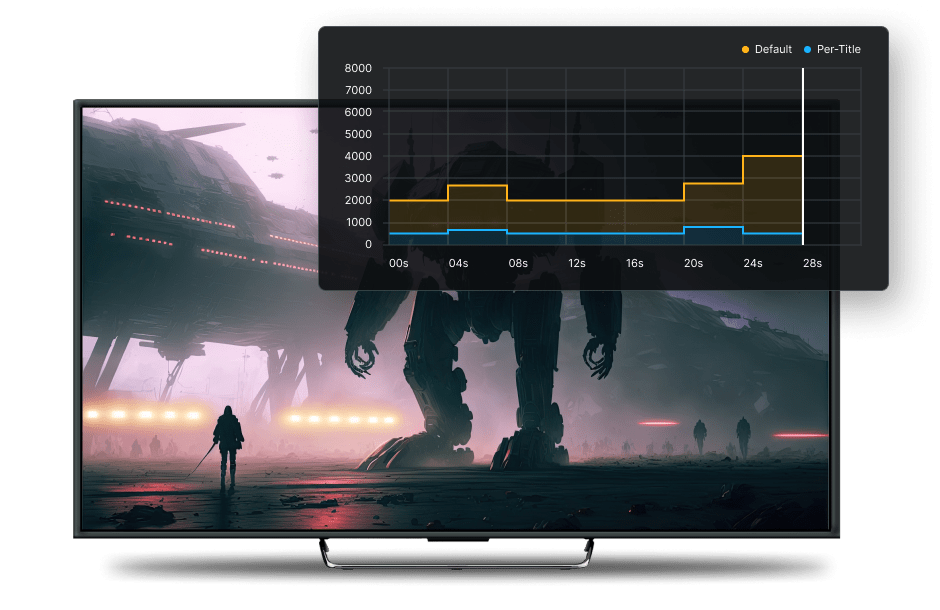

Automatically analyze the complexity of every file and create the ideal adaptive bitrate (ABR) ladder to maximize quality of experience for each video.

Improved QoE

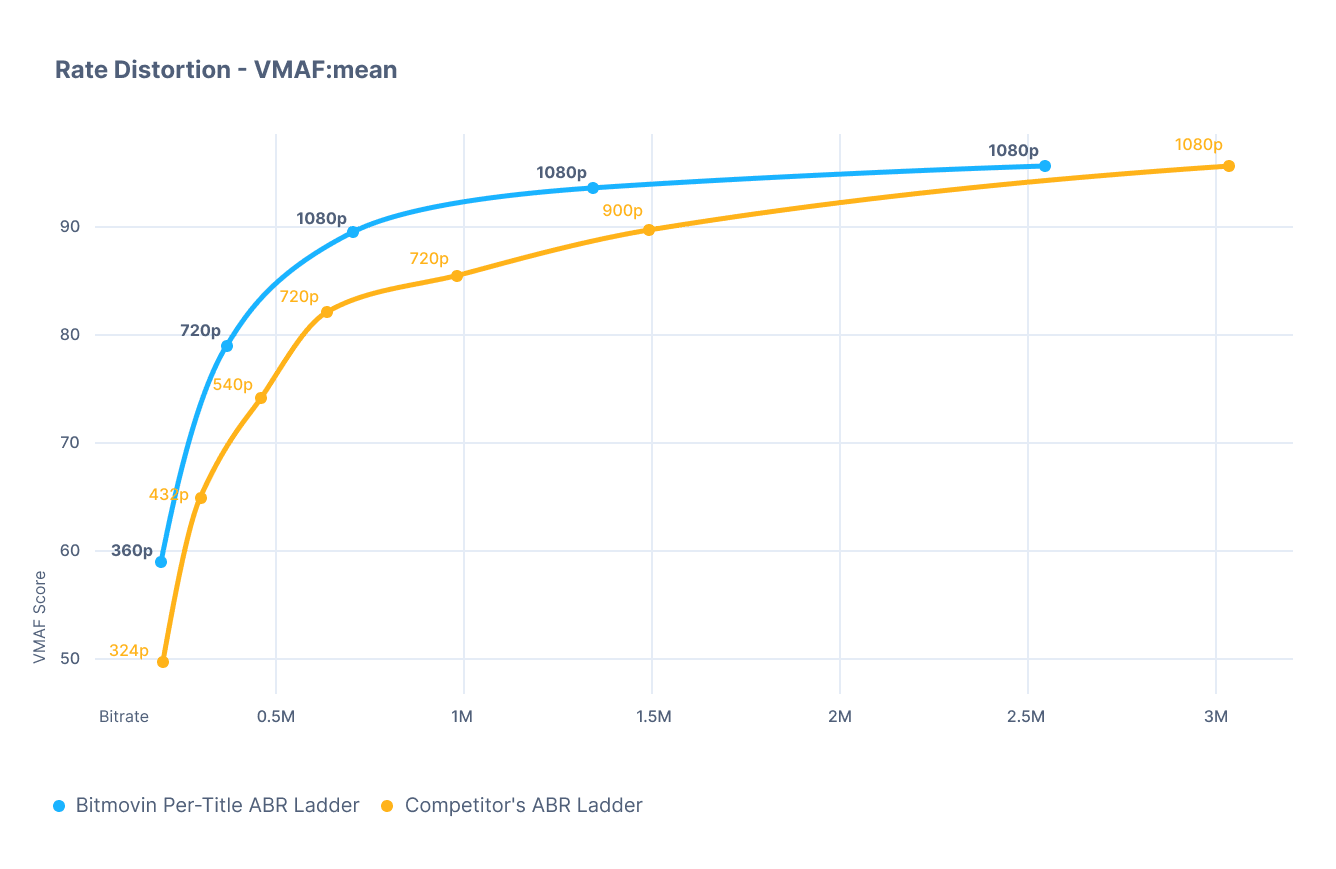

Create higher quality video at up to 70% lower bitrates than the competition at the same resolution, making your HD and 4K content available to a wider audience.

Reduced costs

Decrease your storage footprint and CDN egress costs by up to 80% without sacrificing quality by eliminating unnecessary variants from your ABR ladder.

Use Cases

Efficiently handle content with varying complexity

Avoid a one-size-fits-all approach to encoding; don’t be left sacrificing quality for some of your videos and wasting data on others.

Explore new codecs and workflows

Skip the tedious benchmarking and trial-and-error phase that comes with adding a new codec and optimizing its settings.

The industry’s most flexible Per-Title Encoding solution

- Configured for both AVOD and SVOD use cases

- Pristine premium content without breaking the bank

- Control storage and delivery costs without impact on visual quality

- No configuration required; available in a single request with the Simple Encoding API

- Highly customizable – configure upper and lower bitrate bounds, define ABR ladder rungs and take advantage of multi-codec support
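As a rough illustration of what upper and lower bitrate bounds do to a ladder, the sketch below filters a list of rung bitrates so that only rungs inside the configured range remain. The class name `LadderBounds`, the method `withinBounds` and the example bitrates are hypothetical and are not part of the Bitmovin API; this only shows the idea, not how the encoder applies its bounds.

```java
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical helper, not part of any Bitmovin SDK.
class LadderBounds {

    /** Keeps only the rungs whose bitrate (bits per second) lies within [minBps, maxBps]. */
    static List<Integer> withinBounds(List<Integer> rungBitratesBps, int minBps, int maxBps) {
        if (minBps > maxBps) {
            throw new IllegalArgumentException("minBps must not exceed maxBps");
        }
        return rungBitratesBps.stream()
                .filter(bps -> bps >= minBps && bps <= maxBps)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Example ladder with made-up bitrates.
        List<Integer> ladder = List.of(300_000, 800_000, 2_400_000, 4_800_000, 9_600_000);
        // prints [800000, 2400000, 4800000]
        System.out.println(withinBounds(ladder, 500_000, 5_000_000));
    }
}
```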

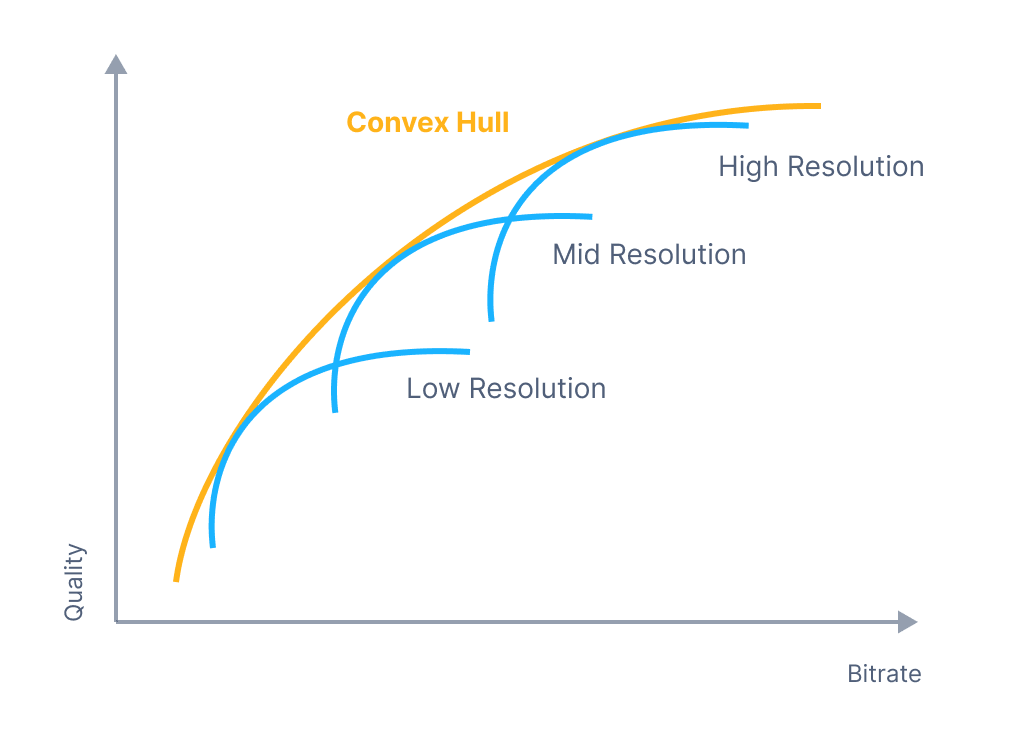

Best-in-class ABR ladder integrity means best QoE for viewers

- For every resolution of a given video, there is a bitrate where visual quality reaches its peak. When plotted on a graph, the points where quality is maximized form a “convex hull”.

- Bitmovin’s Per-Title Encoding beats the competition when it comes to ABR ladder integrity, meaning fewer visual quality drops, less noticeable switches between variants and an overall better quality of experience for viewers.
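The convex hull idea can be sketched in a few lines. The example below assumes a set of test encodes, each with a resolution, a bitrate and a measured quality score, and picks the best-scoring resolution at every tested bitrate. This "upper envelope" is a simplification of a true convex hull selection. The class `QualityEnvelope`, its `Sample` type and any scores passed in are hypothetical; this is not Bitmovin's algorithm.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical sketch, not Bitmovin's implementation.
class QualityEnvelope {

    /** One test encode: resolution height, bitrate in kbps, and a measured quality score. */
    static final class Sample {
        final int height;
        final int kbps;
        final double quality;

        Sample(int height, int kbps, double quality) {
            this.height = height;
            this.kbps = kbps;
            this.quality = quality;
        }
    }

    /** For each tested bitrate, returns the highest-quality sample, ordered by ascending bitrate. */
    static List<Sample> upperEnvelope(List<Sample> samples) {
        Map<Integer, Sample> bestPerBitrate = new TreeMap<>();
        for (Sample s : samples) {
            // Keep whichever sample scores higher at this bitrate.
            bestPerBitrate.merge(s.kbps, s, (a, b) -> a.quality >= b.quality ? a : b);
        }
        return new ArrayList<>(bestPerBitrate.values());
    }

    public static void main(String[] args) {
        // Made-up scores: lower resolutions win at low bitrates, higher ones at high bitrates.
        List<Sample> samples = List.of(
                new Sample(360, 500, 78), new Sample(720, 500, 70), new Sample(1080, 500, 60),
                new Sample(360, 2000, 85), new Sample(720, 2000, 90), new Sample(1080, 2000, 86),
                new Sample(360, 6000, 86), new Sample(720, 6000, 93), new Sample(1080, 6000, 96));
        for (Sample s : upperEnvelope(samples)) {
            System.out.println(s.kbps + " kbps -> " + s.height + "p");
        }
    }
}
```

With these made-up scores, the selected resolution rises with the bitrate (360p, then 720p, then 1080p), which is the pattern a well-formed ladder follows.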

Frequently asked questions

What is Per-Title Encoding?

Per-Title Encoding is a video encoding technique that customizes encoding settings for each individual video to optimize visual quality without wasting overhead data.

How does Per-Title Encoding work?

Per-Title Encoding analyzes the complexity of a video file and determines the encoding parameters needed to maintain the highest level of visual quality together with the most efficient adaptive bitrate ladder.

What are the benefits of Per-Title Encoding?

Per-Title Encoding can increase visual quality while using the same amount of data when compared to traditional encoding techniques. Per-Title Encoding can also be used to lower bitrates, providing storage and delivery cost savings, while maintaining the same visual quality as traditional encoding.
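To make the storage side of this concrete, the sketch below estimates the size of an ABR ladder as the sum of its rung bitrates multiplied by the video duration, divided by 8 to convert bits to bytes. Both ladders and the one-hour duration are made-up illustration values, the class `LadderStorage` is hypothetical, and the estimate ignores audio tracks and container overhead.

```java
import java.util.List;

// Hypothetical back-of-the-envelope calculator, not part of any Bitmovin SDK.
class LadderStorage {

    /** Approximate video storage in bytes: sum of rung bitrates (bps) x duration (s) / 8. */
    static long storageBytes(List<Long> rungBitratesBps, long durationSeconds) {
        long totalBps = 0;
        for (long bps : rungBitratesBps) {
            totalBps += bps;
        }
        return totalBps * durationSeconds / 8;
    }

    public static void main(String[] args) {
        long oneHour = 3600;
        // Made-up fixed ladder with five rungs.
        List<Long> fixedLadder = List.of(8_000_000L, 5_000_000L, 3_000_000L, 1_500_000L, 800_000L);
        // Made-up per-title ladder for a low-complexity video: fewer rungs, lower bitrates.
        List<Long> perTitleLadder = List.of(4_500_000L, 2_400_000L, 1_000_000L);

        System.out.println(storageBytes(fixedLadder, oneHour));    // prints 8235000000
        System.out.println(storageBytes(perTitleLadder, oneHour)); // prints 3555000000
    }
}
```

In this hypothetical case the per-title ladder needs about 57% less storage than the fixed one. Actual savings depend on the content and on the ladders being compared.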

What types of content benefit most from Per-Title Encoding?

HD and higher resolution content (especially 4K and 360) and longer form content with varying complexity will benefit the most from Per-Title Encoding. For short-form content like ads or time-sensitive workflows, the added time for complexity analysis may outweigh the benefits.

Which codecs are supported with Per-Title Encoding?

Bitmovin’s Per-Title Encoding can be used with the following codecs: H.264/AVC, H.265/HEVC, VP9 and AV1.

WHITEPAPER

Choosing a Per-Title Encoding Technology

Streaming and encoding expert Jan Ozer evaluated several Per-Title Encoding technologies and presents his results and analysis in this report. Read more to learn why he chose Bitmovin as the best solution.

“What was particularly impressive was that Bitmovin performed well with all types of videos…” – Jan Ozer

Get started easily with our VOD Encoding Wizard and get access to 2000 free encoding minutes monthly

Built by and for developers

Developer resources to get started quickly, APIs & documentation to integrate easily.

    // Fragment: assumes bitmovinApi, encoding, dashManifest and hlsManifest were created earlier.
    final var startEncodingRequest = new StartEncodingRequest();

    // Reference the DASH manifest to generate with the encoding
    final var dashManifestResource = new ManifestResource();
    dashManifestResource.setManifestId(dashManifest.getId());
    startEncodingRequest.setVodDashManifests(List.of(dashManifestResource));

    // Reference the HLS manifest to generate with the encoding
    final var hlsManifestResource = new ManifestResource();
    hlsManifestResource.setManifestId(hlsManifest.getId());
    startEncodingRequest.setVodHlsManifests(List.of(hlsManifestResource));

    startEncodingRequest.setManifestGenerator(ManifestGenerator.V2);

    // Start the encoding with the request built above
    bitmovinApi.encoding.encodings.start(encoding.getId(), startEncodingRequest);

MORE RESOURCES

Simplifying encoding workflows through our innovation

Learn about the latest features built to encode your files in the best quality with the quickest turn-around times.

Cloud Connect

Bitmovin dynamically scales worker nodes in your cloud environment on AWS, Azure or GCP. Reduce costs and use VOD encoding to satisfy your committed contracts.

Cloud Scalability

Scale with efficiency and sustainability in mind with our cloud-native encoding service. Adapt to changing demand with no idle infrastructure and no fixed cost.

Multi-pass Encoding

Ensure crystal-clear quality and maximize video processing efficiency through the Bitmovin VOD Encoder’s multi-pass encoding capabilities.

Split and Stitch

Unlock the quickest asset turnaround times and unrivaled video compression efficiency with Bitmovin’s split and stitch technology, revolutionizing how companies deliver high-definition content seamlessly.

AV1

The next-generation video codec that can deliver stunning 4K video over connections that are limited to SD with older codecs.

Partnerships

The right partners for every workflow

The Bitmovin VOD Encoder is integrated with trusted industry partners, covering everything from Digital Rights Management (DRM) and Forensic Watermarking to Server-Side Ad Insertion and CDNs.

With this collaborative approach, Bitmovin empowers development teams to get to market faster, keep their content secure, maximize ROI and stream worldwide.

Related content

Case study

BILD

Transforming global news delivery with lightning-fast encoding, streaming, and top-notch quality

Case study

Globo

Delivering top-quality content without compromising bandwidth with Bitmovin’s 3-pass encoding

Case study

Easel TV

Leveraging Bitmovin, Easel TV reduced latency by 94% and sped up content delivery by 93%

Case study

NRL

Enhancing the National Rugby League’s video workflow to boost efficiency and resolve issues

Ready to deliver stunning video with our encoder?

Start encoding today by signing up for a free trial!