In the first part of this blog post we provided a rough overview of QUIC and HTTP/2 and how they can be used for DASH. In this second part we provide details about the actual evaluation.

Evaluation Setup

The test content for the evaluation is Big Buck Bunny, which has been encoded with x264 and segmented with MP4Box into 14 representations. The segment length is 2 seconds and the bitrate varies from 100 to 4,500 kbps as follows: 100, 200, 350, 500, 700, 900, 1100, 1300, 1600, 1900, 2300, 2800, 3400, and 4500. We use a constant frame rate of 30 fps and a constant resolution of 640×360 pixels, since we are mainly interested in changes in the actual bitrate and the resolution is therefore of minor importance.

The test environment comprises a server component (hosting HTTP and QUIC servers) and the DASH client, connected through a network emulator responsible for bandwidth shaping (token bucket filter and traffic control program) and network delay emulation (netem program). The server hosts a standard Apache Web server (v2.4.7) with an nghttp2 proxy for the HTTP/2.0 delivery. Moreover, it runs the QUIC prototype server, which supports versions 15 to 19 of the QUIC protocol, to deliver media content using HTTP/1.1 directly over QUIC or using SPDY over QUIC. The DASH client is based on the QTSamplePlayer that comes with libdash, which has been enhanced with SPDY/HTTP/2.0/QUIC capabilities and a simple adaptation logic referred to as DASH-JS.
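As a rough illustration of the emulator setup described above, the following sketch builds the kind of `tc` commands that combine netem (delay) with a token bucket filter (rate limit). The interface name, the `burst` and `latency` values, and the choice to apply the full RTT as a delay on a single interface are assumptions for illustration; the exact configuration used in the evaluation is not given here.

```python
def shaping_commands(dev, rate_kbit, delay_ms):
    """Return tc command strings that add a netem delay and a tbf rate limit.

    dev, burst and latency values are illustrative assumptions, not the
    settings used in the evaluation. The commands are only built as strings
    here; running them requires root privileges on a Linux host.
    """
    return [
        # netem as root qdisc: adds a fixed delay to outgoing packets
        f"tc qdisc add dev {dev} root handle 1: netem delay {delay_ms}ms",
        # tbf as child of netem: limits the rate with a token bucket filter
        f"tc qdisc add dev {dev} parent 1:1 handle 10: "
        f"tbf rate {rate_kbit}kbit burst 32kbit latency 400ms",
    ]


if __name__ == "__main__":
    # Example: restrict the link to 900 kbit/s with a 50 ms delay
    for cmd in shaping_commands("eth0", 900, 50):
        print(cmd)
```

The function only prints the commands, so the values can be inspected before applying them on the emulator host.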

For each subsequent evaluation, five runs have been conducted and the mean value is presented. Note that differences between individual runs are so marginal that we refrain from showing confidence intervals.

Protocol Overhead

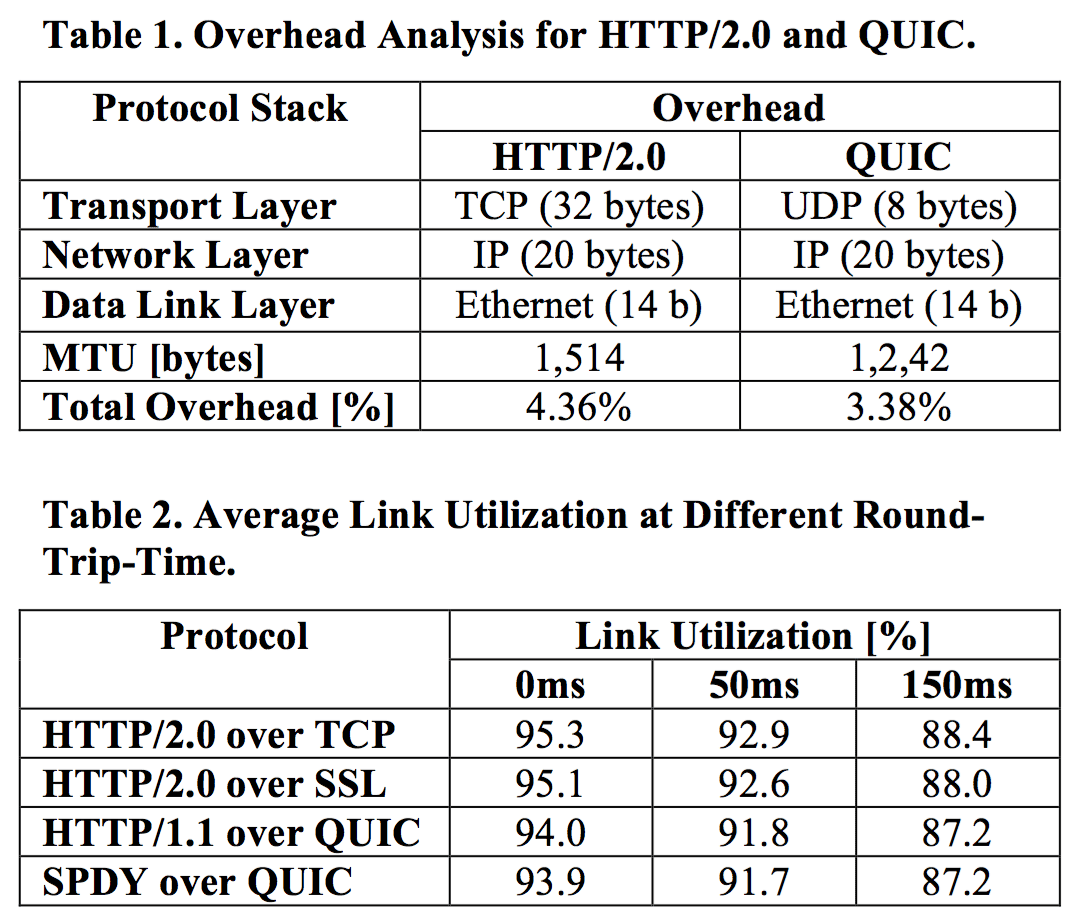

The protocol overhead is first computed based on the underlying specifications and summarized in Table 1. HTTP is based on TCP, which introduces an overhead of 20 bytes for the TCP header and an additional 12 bytes for the optional header fields. QUIC is based on UDP, which introduces an overhead of 8 bytes. The remaining overhead is the same for both approaches, i.e., 20 bytes for the IP header and an additional 14 bytes for the Ethernet header at the link layer. HTTP/2.0 and QUIC adopt an additional framing layer above TCP and UDP, respectively. For HTTP/2.0, each frame has an 8-byte header to carry the length, stream identifier, type, and corresponding flags. For QUIC, the frame header does not have a fixed length but varies between 2 and 19 bytes.
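The header arithmetic above can be sketched as follows. The header sizes are taken from the text; the payload size per packet and the simplification of one frame per packet are assumptions made only for this example. The sketch counts nothing beyond these headers, so it is not expected to reproduce the measured values.

```python
# Header sizes in bytes, as listed in the text
ETHERNET = 14
IP = 20
TCP = 20 + 12            # base TCP header plus optional header fields
UDP = 8
HTTP2_FRAME = 8          # fixed frame header size given in the text
QUIC_FRAME_MIN = 2       # QUIC frame header varies between 2 ...
QUIC_FRAME_MAX = 19      # ... and 19 bytes


def header_overhead_percent(header_bytes, payload_bytes):
    """Share of header bytes in the total bytes on the wire, in percent."""
    return 100.0 * header_bytes / (header_bytes + payload_bytes)


# Assumed payload per packet (hypothetical, not from the article)
PAYLOAD = 1350

# Per-packet header totals, assuming one frame per packet
http2_headers = ETHERNET + IP + TCP + HTTP2_FRAME
quic_headers_min = ETHERNET + IP + UDP + QUIC_FRAME_MIN
quic_headers_max = ETHERNET + IP + UDP + QUIC_FRAME_MAX

if __name__ == "__main__":
    for name, hdr in [
        ("HTTP/2.0 over TCP", http2_headers),
        ("QUIC (min frame header)", quic_headers_min),
        ("QUIC (max frame header)", quic_headers_max),
    ]:
        pct = header_overhead_percent(hdr, PAYLOAD)
        print(f"{name}: {hdr} header bytes, {pct:.2f}% of bytes on the wire")
```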

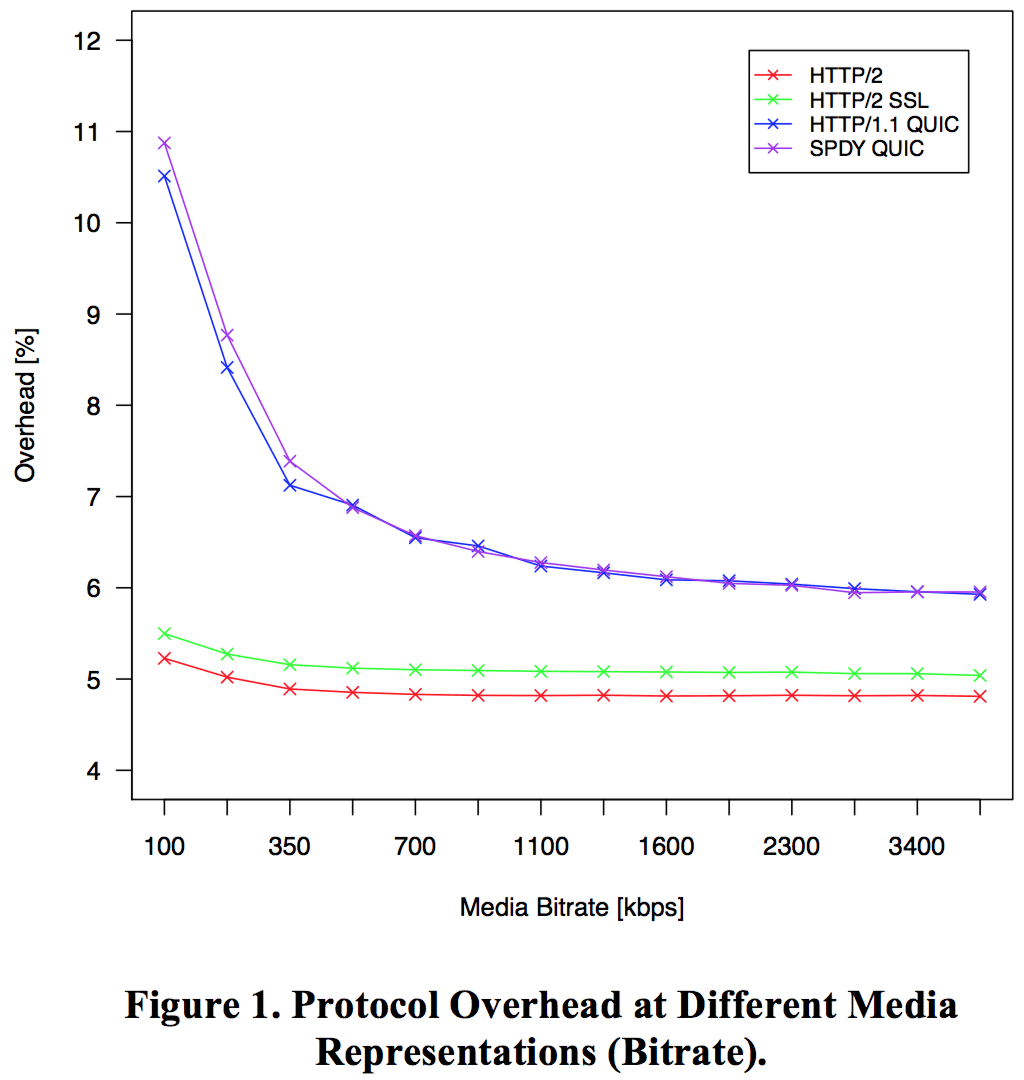

The actual protocol overhead is finally measured for the 14 different representations in a DASH scenario as depicted in Figure 1. The horizontal axis shows the quality level of the encoded representation while the vertical axis shows the protocol overhead in percent. In general, the overhead is below 10%, except for QUIC at the very low bitrate of 100 kbps. However, QUIC always comes with a higher overhead than HTTP/2.0 over TCP. This result is counter-intuitive since QUIC runs over UDP, which has a slightly lower protocol overhead than TCP. Keep in mind, though, that QUIC provides a multiplexed stream protocol on top of UDP and security comparable with SSL. Comparing the solutions that provide encryption, the average overhead of QUIC is about 1.65% higher than that of HTTP/2.0 over SSL.

Link Utilization

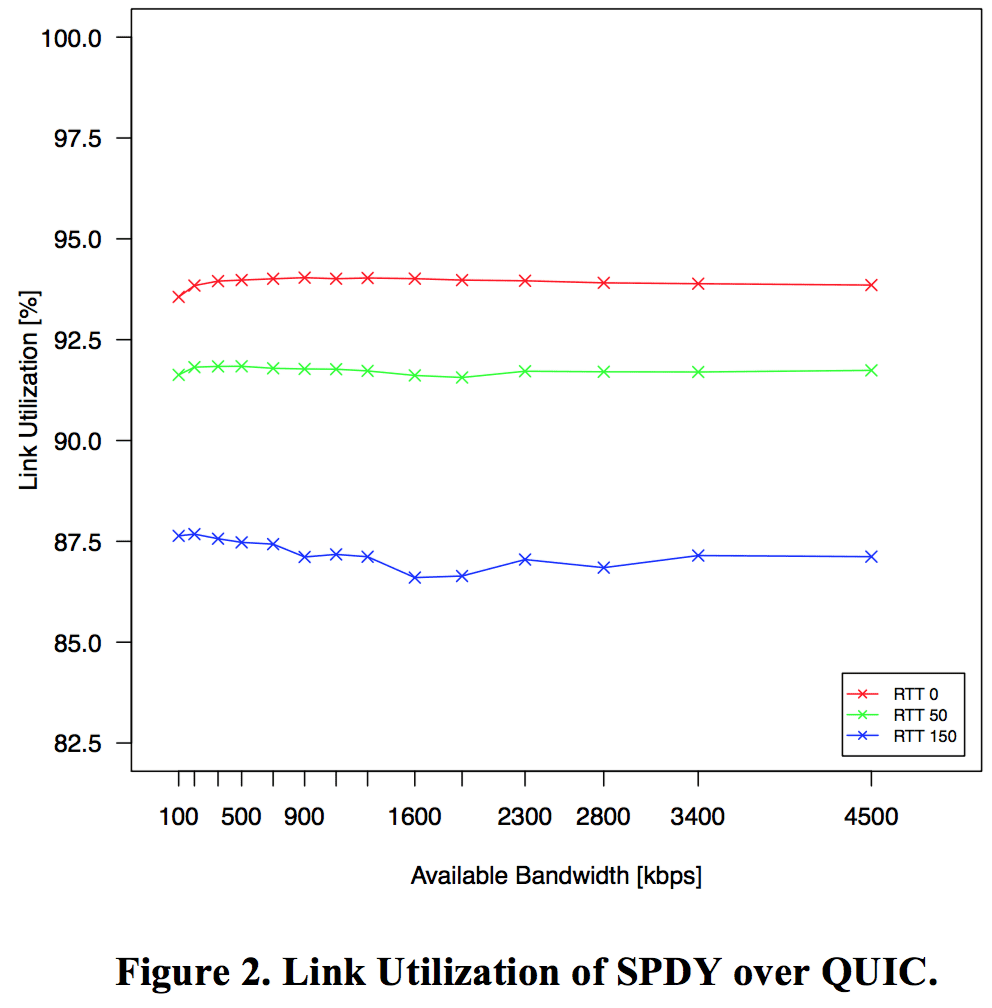

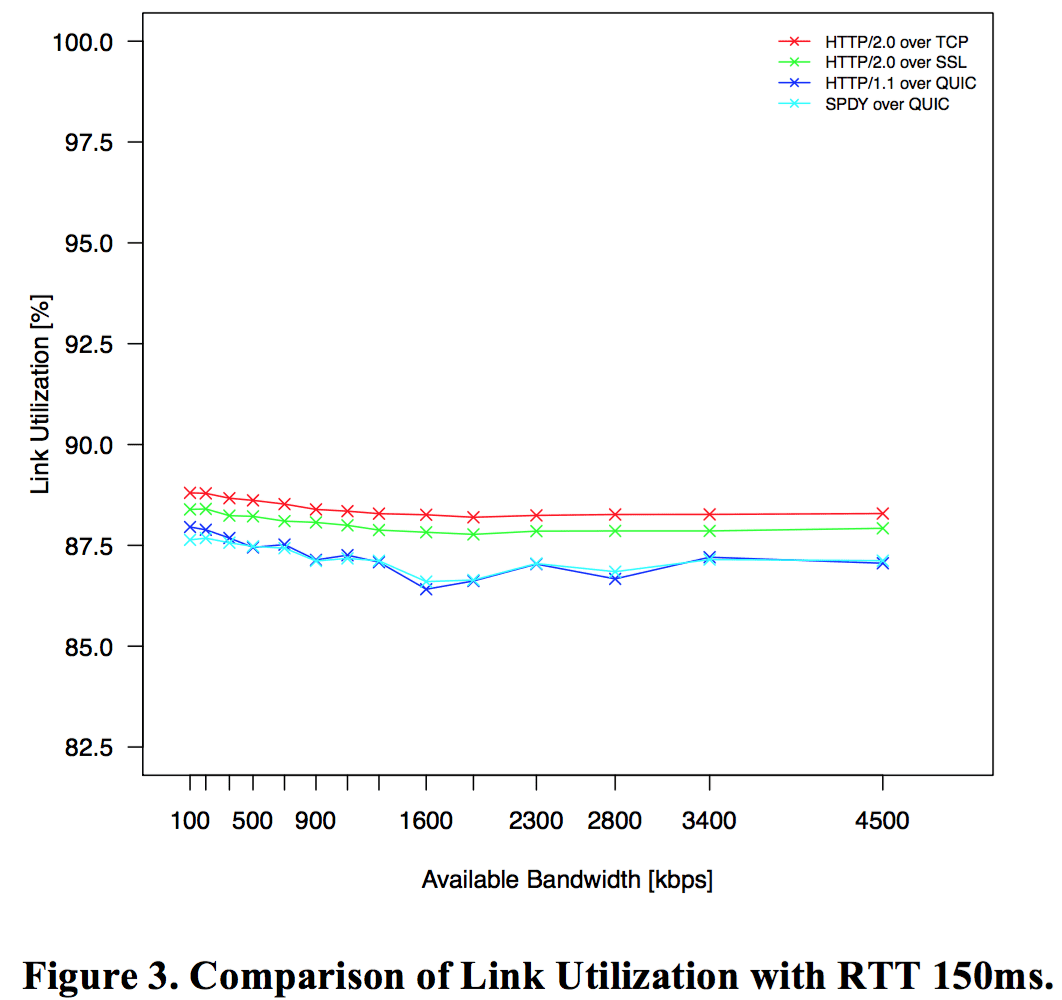

The link utilization has been tested with all representations using different round trip times (RTTs) of 0ms (local area networks), 50ms (fixed line/wired network), and 150ms (wireless/mobile network). The actual link utilization is calculated as the ratio of the effective throughput to the available bandwidth. For each individual run, the bandwidth is restricted to the bitrate of the corresponding representation. As expected, the higher the RTT, the lower the link utilization, but in all cases it is >80% as shown in Table 2.
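The ratio described above can be written down as a short sketch. The function name and the sample numbers are hypothetical and serve only to illustrate the calculation; they are not measurements from this evaluation.

```python
def link_utilization(bytes_received, duration_s, available_kbps):
    """Ratio of effective throughput to available bandwidth (0.0 to 1.0).

    bytes_received: bytes transferred during the measurement interval
    duration_s:     length of the measurement interval in seconds
    available_kbps: bandwidth configured in the network emulator, in kbit/s
    """
    effective_kbps = bytes_received * 8 / 1000 / duration_s
    return effective_kbps / available_kbps


if __name__ == "__main__":
    # Hypothetical run: 225,000 bytes in 2 s over a link shaped to 1000 kbit/s
    utilization = link_utilization(225_000, 2.0, 1000)
    print(f"link utilization: {utilization:.0%}")
```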

Figure 2 shows the link utilization of SPDY over QUIC for the different RTTs and the given available bandwidth. The results are stable over the bandwidth and similar for the other protocol combinations. A comparison of the link utilization with RTT 150ms is depicted in Figure 3. The comparisons of the other RTTs look similar but with a higher link utilization according to the values shown in Table 2. Interestingly, the link utilization is not as stable when using QUIC compared to TCP/SSL configurations. However, QUIC is becoming more stable with decreased RTT (not shown here).

Adaptation Performance

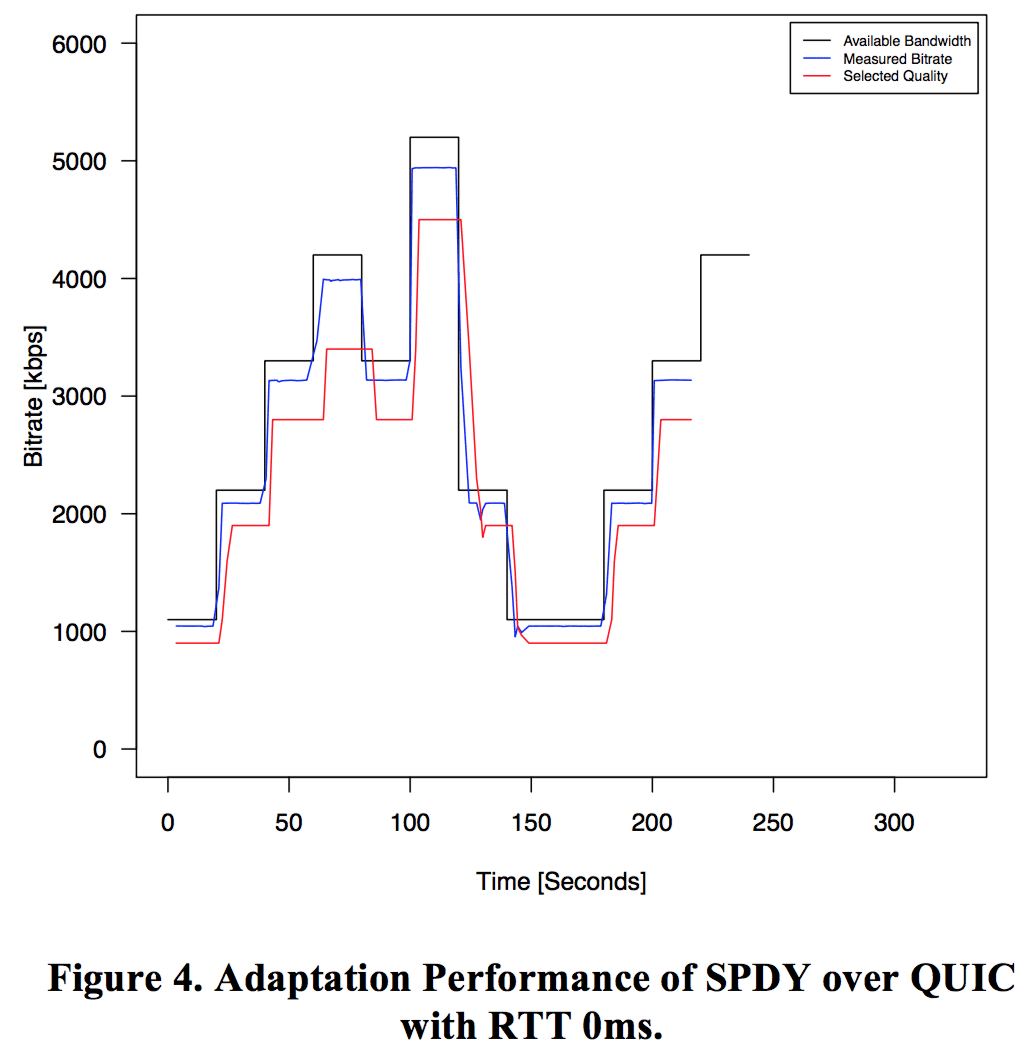

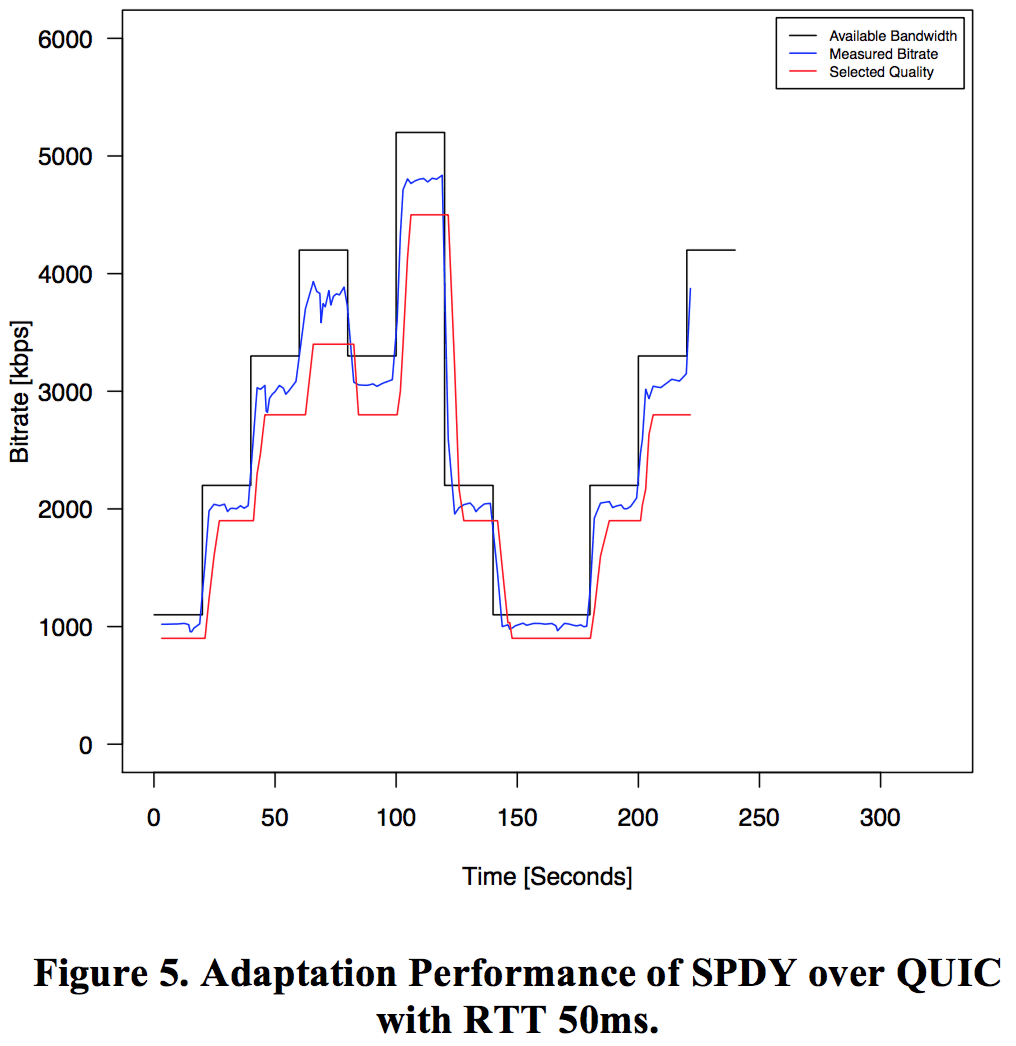

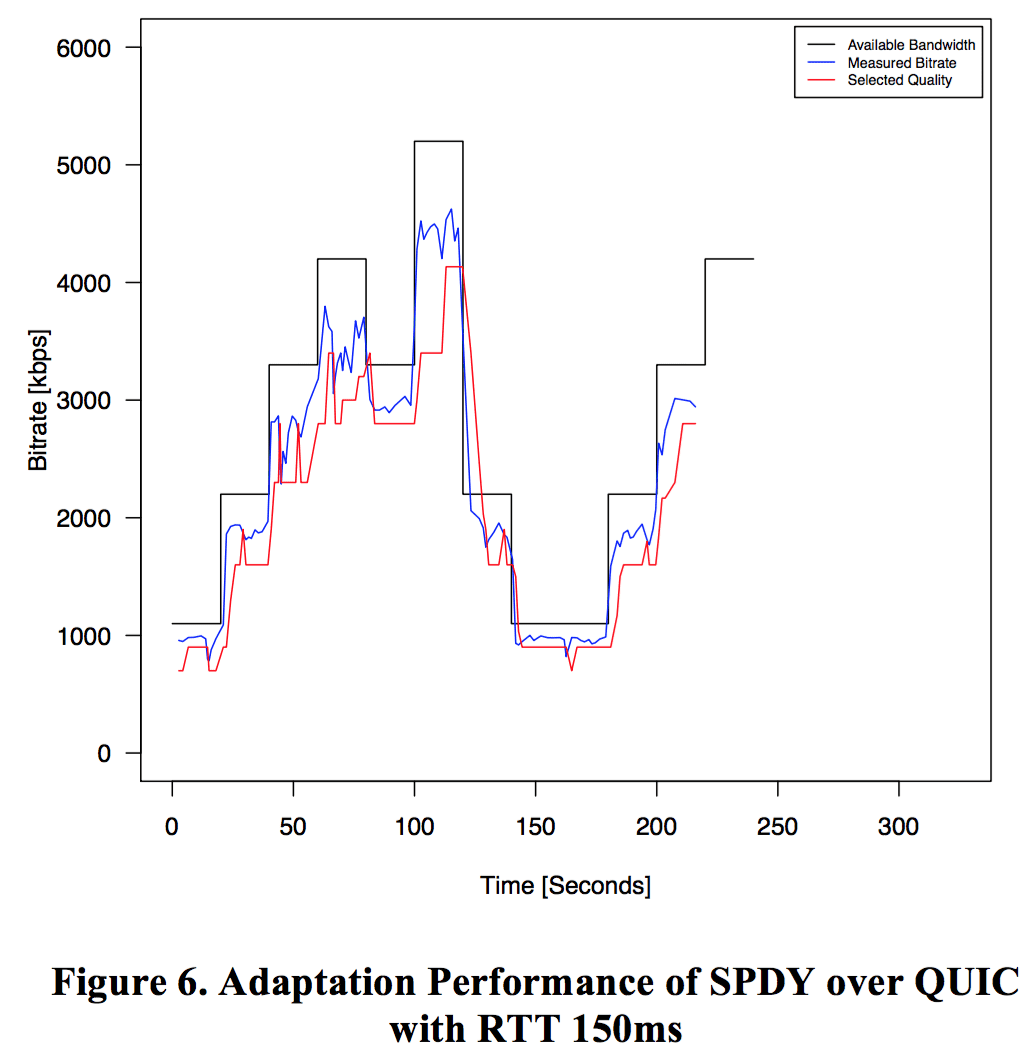

The adaptation performance is evaluated for a given bandwidth trajectory that limits the available bandwidth to between 1 and 5 Mbps for the different protocol combinations. Additionally, the same RTTs as for the link utilization have been used. The results reveal that the adaptation performance (i.e., the average media throughput) is very similar for the different protocol combinations (>2 Mbps in all cases) and, thus, we focus on SPDY over QUIC for different RTTs. For the actual adaptation logic we adopt DASH-JS, which is based on a simple bandwidth estimation.

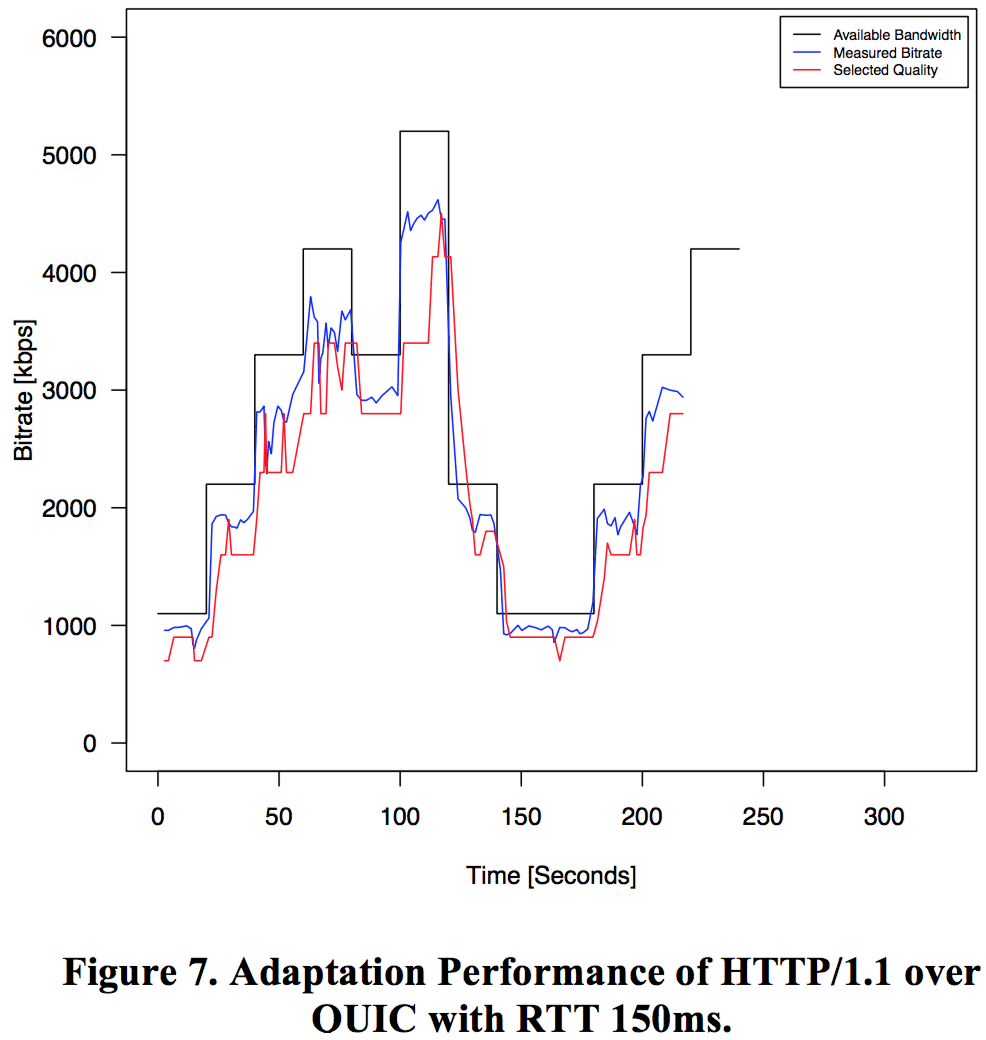

Figure 4 shows the adaptation performance of SPDY over QUIC with RTT 0ms. The black line shows the available bandwidth using the bandwidth shaping within the network emulator. The blue line represents the available bandwidth measured while downloading the actual segments and providing the input for the adaptation logic (i.e., DASH-JS). The red line depicts the output of adaptation logic and corresponds to the selected quality according to the available representations within the MPD.
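To make the relation between the measured bandwidth and the selected quality more concrete, here is a minimal sketch of a throughput-based selection rule using the 14 bitrates from the evaluation setup. It is an assumption about what a simple bandwidth-estimation logic might look like and not the actual DASH-JS implementation, which may smooth or weight the estimate differently; the function names are hypothetical.

```python
# Bitrates of the 14 representations in kbit/s (from the evaluation setup)
BITRATES_KBPS = [100, 200, 350, 500, 700, 900, 1100, 1300,
                 1600, 1900, 2300, 2800, 3400, 4500]


def measured_throughput_kbps(segment_bytes, download_time_s):
    """Bandwidth estimate derived from the download of a single segment."""
    return segment_bytes * 8 / 1000 / download_time_s


def select_representation(throughput_kbps, bitrates=BITRATES_KBPS):
    """Pick the highest bitrate that does not exceed the estimate.

    Falls back to the lowest representation if even that one exceeds
    the estimated throughput.
    """
    candidates = [b for b in bitrates if b <= throughput_kbps]
    return max(candidates) if candidates else min(bitrates)


if __name__ == "__main__":
    # Hypothetical segment: 625,000 bytes downloaded in 2 s -> 2500 kbit/s
    estimate = measured_throughput_kbps(625_000, 2.0)
    print(estimate, select_representation(estimate))
```

With such a rule the selected quality follows the measured bandwidth directly, segment by segment.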

Figure 5 shows the adaptation performance of SPDY over QUIC with RTT 50ms and Figure 6 with RTT 150ms. The results reveal that DASH-JS is robust against different RTTs and reacts instantly to the available/measured bandwidth. Figure 7 provides the results of the adaptation behavior of HTTP/1.1 over QUIC with RTT 150ms, which is indeed very similar to the results of SPDY over QUIC shown in Figure 6. Thus, we can conclude that the adaptation logic does not have an impact on the underlying protocols.

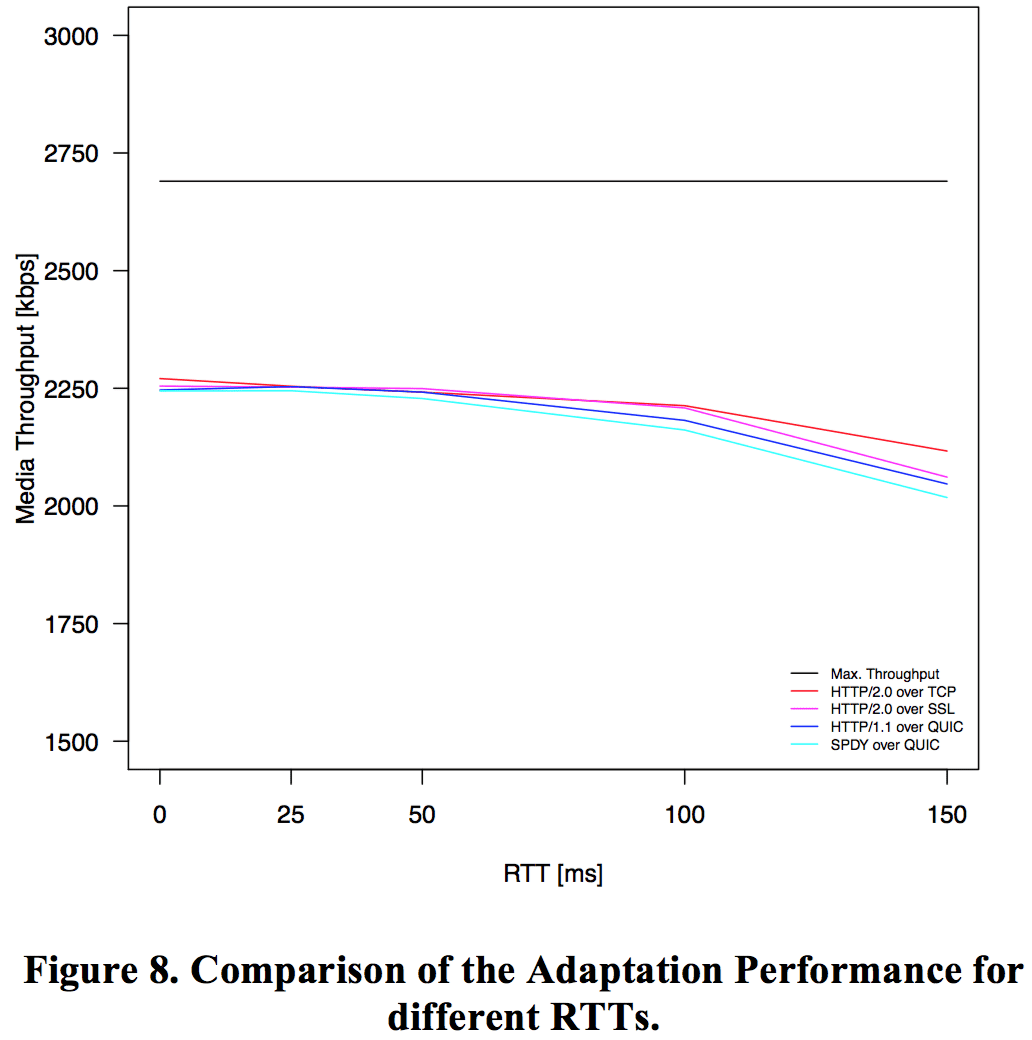

Finally, a comparison of the average media throughput of all protocol combinations for the different RTTs is shown in Figure 8. The black line represents the maximum throughput and corresponds to the average value of the given bandwidth trajectory (i.e., 2.7 Mbps). The results clearly indicate that all protocol combinations provide roughly the same adaptation performance: the media throughput decreases with increasing RTT but is always above 2 Mbps.

Discussion

In this section we discuss the results achieved in this evaluation and compare them with the results reported in a similar study by Mueller et al., which focuses on HTTP/2.0 and SPDY only. In fact, the evaluation setup is identical and, thus, allows for a direct comparison of the results. In principle, we confirm the results of Mueller et al. but have not further investigated HTTP/1.0, as we consistently use HTTP/1.1 including its features such as persistent connections and request pipelining. The bandwidth trajectory is different, but our results show the same behavior as reported by Mueller et al.

In our setup we add QUIC as an alternative to TCP for the actual transport layer protocol, which – together with HTTP/2.0 – eliminates the Head-of-Line blocking problem of pipelined requests in HTTP/1.1. However, using QUIC instead of TCP does not contribute to the overall streaming performance in terms of increased or decreased media throughput at the client.

Interestingly, QUIC, which is based on UDP, comes with a slightly higher overhead than TCP, specifically for low bitrates, but the overhead is still <10% in all cases and <7% in the majority of the cases.

Conclusions

In this article we evaluated advanced transport options for Dynamic Adaptive Streaming over HTTP (DASH). To this end, we evaluated HTTP/1.1/2.0/SPDY over TCP/QUIC using a predefined evaluation setup. In this context, QUIC comes with a slightly higher protocol overhead than TCP, but the overhead is below 10% except for very low bitrates (≤100kbps). The link utilization decreases with increasing RTT but is always >87% of the available bandwidth and remains stable for different bandwidths. The adaptation algorithm does not have an impact on the transport scheme used, but the media throughput decreases with increasing RTT. Thus, the results reported here confirm previous results in this application domain and provide additional findings for QUIC.

Acknowledgment

This work has been supported in part by the Austrian Research Promotion Agency (FFG) under the AdvUHD-DASH project.
